Spark: 3.1.3-bin-hadoop3.2

TiDB: v6.1.0

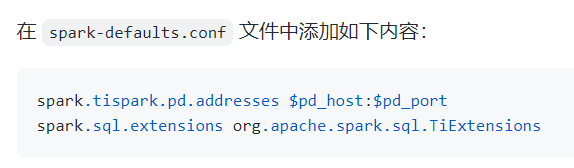

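While deploying TiSpark, Spark fails with an error as soon as I reach the step shown in the screenshot above.

Running spark-shell produces the following error: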

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

22/07/31 08:37:16 ERROR Main: Failed to initialize Spark session.

java.lang.reflect.InvocationTargetException

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.spark.sql.SparkSession$.$anonfun$applyExtensions$1(SparkSession.scala:1192)

at org.apache.spark.sql.SparkSession$.$anonfun$applyExtensions$1$adapted(SparkSession.scala:1189)

at scala.collection.mutable.ResizableArray.foreach(ResizableArray.scala:62)

at scala.collection.mutable.ResizableArray.foreach$(ResizableArray.scala:55)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:49)

at org.apache.spark.sql.SparkSession$.org$apache$spark$sql$SparkSession$$applyExtensions(SparkSession.scala:1189)

at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:951)

at org.apache.spark.repl.Main$.createSparkSession(Main.scala:106)

at $line3.$read$$iw$$iw.<init>(<console>:15)

at $line3.$read$$iw.<init>(<console>:42)

at $line3.$read.<init>(<console>:44)

at $line3.$read$.<init>(<console>:48)

at $line3.$read$.<clinit>(<console>)

at $line3.$eval$.$print$lzycompute(<console>:7)

at $line3.$eval$.$print(<console>:6)

at $line3.$eval.$print(<console>)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at scala.tools.nsc.interpreter.IMain$ReadEvalPrint.call(IMain.scala:745)

at scala.tools.nsc.interpreter.IMain$Request.loadAndRun(IMain.scala:1021)

at scala.tools.nsc.interpreter.IMain.$anonfun$interpret$1(IMain.scala:574)

at scala.reflect.internal.util.ScalaClassLoader.asContext(ScalaClassLoader.scala:41)

at scala.reflect.internal.util.ScalaClassLoader.asContext$(ScalaClassLoader.scala:37)

at scala.reflect.internal.util.AbstractFileClassLoader.asContext(AbstractFileClassLoader.scala:41)

at scala.tools.nsc.interpreter.IMain.loadAndRunReq$1(IMain.scala:573)

at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:600)

at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:570)

at scala.tools.nsc.interpreter.IMain.$anonfun$quietRun$1(IMain.scala:224)

at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:214)

at scala.tools.nsc.interpreter.IMain.quietRun(IMain.scala:224)

at org.apache.spark.repl.SparkILoop.$anonfun$initializeSpark$2(SparkILoop.scala:83)

at scala.collection.immutable.List.foreach(List.scala:392)

at org.apache.spark.repl.SparkILoop.$anonfun$initializeSpark$1(SparkILoop.scala:83)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.tools.nsc.interpreter.ILoop.savingReplayStack(ILoop.scala:99)

at org.apache.spark.repl.SparkILoop.initializeSpark(SparkILoop.scala:83)

at org.apache.spark.repl.SparkILoop.$anonfun$process$4(SparkILoop.scala:165)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at scala.tools.nsc.interpreter.ILoop.$anonfun$mumly$1(ILoop.scala:168)

at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:214)

at scala.tools.nsc.interpreter.ILoop.mumly(ILoop.scala:165)

at org.apache.spark.repl.SparkILoop.loopPostInit$1(SparkILoop.scala:153)

at org.apache.spark.repl.SparkILoop.$anonfun$process$10(SparkILoop.scala:221)

at org.apache.spark.repl.SparkILoop.withSuppressedSettings$1(SparkILoop.scala:189)

at org.apache.spark.repl.SparkILoop.startup$1(SparkILoop.scala:201)

at org.apache.spark.repl.SparkILoop.process(SparkILoop.scala:236)

at org.apache.spark.repl.Main$.doMain(Main.scala:78)

at org.apache.spark.repl.Main$.main(Main.scala:58)

at org.apache.spark.repl.Main.main(Main.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)

at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:951)

at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1039)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1048)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

Caused by: java.lang.NoClassDefFoundError: scala/Function1$class

at org.apache.spark.sql.TiExtensions.<init>(TiExtensions.scala:23)

... 67 more

Caused by: java.lang.ClassNotFoundException: scala.Function1$class

at java.net.URLClassLoader.findClass(URLClassLoader.java:387)

at java.lang.ClassLoader.loadClass(ClassLoader.java:418)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:352)

at java.lang.ClassLoader.loadClass(ClassLoader.java:351)

... 68 more
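One possible lead, offered as a guess rather than a confirmed diagnosis: class names ending in `$class` under the `scala` package are the trait helper classes that Scala 2.11 and earlier generated, and Scala 2.12 no longer emits them. A missing `scala/Function1$class` therefore often means a jar compiled for Scala 2.11 is being loaded on a Scala 2.12 runtime. The sketch below only classifies the missing class name and reads the Scala binary version from a `scala-library` jar file name; the function names and the sample file name are hypothetical, not part of Spark or TiSpark.

```python
import re
from typing import Optional

# Scala 2.11 and earlier compiled trait method bodies into helper classes
# named "<Trait>$class". Scala 2.12 stopped generating these classes.
TRAIT_IMPL = re.compile(r"^scala[./].+\$class$")


def looks_like_scala_211_reference(missing_class: str) -> bool:
    """Return True if the missing class name has the pre-2.12 trait helper shape."""
    return bool(TRAIT_IMPL.match(missing_class))


def scala_binary_version(jar_name: str) -> Optional[str]:
    """Extract the binary version (e.g. '2.12') from a scala-library jar file name."""
    m = re.match(r"scala-library-(\d+\.\d+)\.\d+.*\.jar$", jar_name)
    return m.group(1) if m else None


if __name__ == "__main__":
    # Class name taken from the "Caused by" line of the stack trace above.
    print(looks_like_scala_211_reference("scala/Function1$class"))
    # Hypothetical file name; check the jars directory of the Spark install for the real one.
    print(scala_binary_version("scala-library-2.12.10.jar"))
```

If the first check is true and the Spark installation ships a 2.12 `scala-library`, the TiSpark jar in use was probably built for a different Scala version than this Spark distribution, so the TiSpark build would need to match both the Spark and Scala versions.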

Any guidance would be much appreciated.