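After upgrading the cluster from 4.0.0 to 4.0.9, I only reloaded the configuration and never restarted it.

The problem appeared on this restart, the first one since the upgrade.

tiup reports that PD failed to start, yet PD is in the Up state.

Running stop and then start again gives the same result.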

[tidb@b16 ~]$ tiup cluster display test-cluster

Starting component `cluster`: /home/tidb/.tiup/components/cluster/v1.3.1/tiup-cluster display test-cluster

Cluster type: tidb

Cluster name: test-cluster

Cluster version: v4.0.9

SSH type: builtin

Dashboard URL: http://192.168.241.26:12379/dashboard

ID Role Host Ports OS/Arch Status Data Dir Deploy Dir

-- ---- ---- ----- ------- ------ -------- ----------

192.168.241.7:9093 alertmanager 192.168.241.7 9093/9094 linux/x86_64 inactive /home/tidb/deploy/data.alertmanager /home/tidb/deploy

192.168.241.7:3000 grafana 192.168.241.7 3000 linux/x86_64 inactive - /home/tidb/deploy

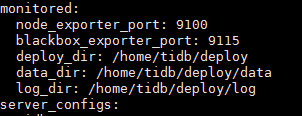

192.168.241.24:12379 pd 192.168.241.24 12379/12380 linux/x86_64 Up /disk1/pd/data.pd /disk1/pd

192.168.241.26:12379 pd 192.168.241.26 12379/12380 linux/x86_64 Up|UI /disk1/pd/data.pd /disk1/pd

192.168.241.49:12379 pd 192.168.241.49 12379/12380 linux/x86_64 Up|L /disk1/pd/data.pd /disk1/pd

192.168.241.7:9090 prometheus 192.168.241.7 9090 linux/x86_64 inactive /home/tidb/deploy/prometheus2.0.0.data.metrics /home/tidb/deploy

192.168.241.26:4000 tidb 192.168.241.26 4000/10080 linux/x86_64 Down - /disk1/pd

192.168.241.7:4000 tidb 192.168.241.7 4000/10080 linux/x86_64 Down - /home/tidb/deploy

192.168.241.11:20160 tikv 192.168.241.11 20160/20180 linux/x86_64 Disconnected /disk1/tikv/data /disk1/tikv

192.168.241.53:20160 tikv 192.168.241.53 20160/20180 linux/x86_64 Disconnected /disk2/tikv/data /disk2/tikv

192.168.241.56:20160 tikv 192.168.241.56 20160/20180 linux/x86_64 Disconnected /disk1/tikv/data /disk1/tikv

192.168.241.58:20160 tikv 192.168.241.58 20160/20180 linux/x86_64 Disconnected /disk1/tikv/data /disk1/tikv

Total nodes: 12

[tidb@b16 ~]$ tiup cluster start test-cluster

Starting component `cluster`: /home/tidb/.tiup/components/cluster/v1.3.1/tiup-cluster start test-cluster

Starting cluster test-cluster...

+ [ Serial ] - SSHKeySet: privateKey=/home/tidb/.tiup/storage/cluster/clusters/test-cluster/ssh/id_rsa, publicKey=/home/tidb/.tiup/storage/cluster/clusters/test-cluster/ssh/id_rsa.pub

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.7

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.24

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.26

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.11

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.56

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.7

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.26

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.58

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.53

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.7

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.49

+ [Parallel] - UserSSH: user=tidb, host=192.168.241.7

+ [ Serial ] - StartCluster

Starting component pd

Starting instance pd 192.168.241.26:12379

Starting instance pd 192.168.241.49:12379

Starting instance pd 192.168.241.24:12379

Start pd 192.168.241.49:12379 success

Start pd 192.168.241.26:12379 success

Start pd 192.168.241.24:12379 success

Starting component node_exporter

Starting instance 192.168.241.26

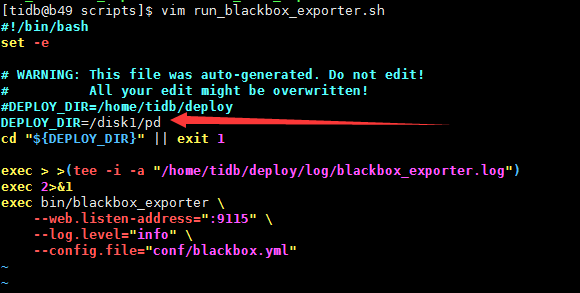

Error: failed to start: pd 192.168.241.26:12379, please check the instance's log(/disk1/pd/log) for more detail.: timed out waiting for port 9100 to be started after 2m0s

Verbose debug logs has been written to /home/tidb/.tiup/logs/tiup-cluster-debug-2021-01-13-15-42-43.log.

Error: run `/home/tidb/.tiup/components/cluster/v1.3.1/tiup-cluster` (wd:/home/tidb/.tiup/data/SLxR0Yk) failed: exit status 1
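One thing worth noting in the error above: it names pd, but the port it timed out on is 9100, not one of the PD ports listed by display (12379/12380). 9100 is the default node_exporter port, and the failure comes right after "Starting component node_exporter". A quick way to see which port is actually unreachable is a plain TCP probe from the control machine. The following is only a minimal sketch; the helper name `port_is_open` is hypothetical and not part of tiup.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the address and applies the timeout to the connect call.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and unreachable hosts.
        return False


def probe(host: str, ports) -> dict:
    """Check several ports on one host and return {port: reachable}."""
    return {port: port_is_open(host, port) for port in ports}
```

For example, `probe("192.168.241.26", [12379, 9100])` would show whether the PD client port and the port from the timeout message are each reachable. If 12379 is open and 9100 is not, that would suggest node_exporter on that host is what is failing to come up, rather than PD itself.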

[root@b26 log]# tail -f pd.log

[2021/01/13 15:49:09.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.7:4000] [interval=2s] [error="dial tcp 192.168.241.7:4000: connect: connection refused"]

[2021/01/13 15:49:09.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.7:10080] [interval=2s] [error="dial tcp 192.168.241.7:10080: connect: connection refused"]

[2021/01/13 15:49:11.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.26:4000] [interval=2s] [error="dial tcp 192.168.241.26:4000: connect: connection refused"]

[2021/01/13 15:49:11.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.26:10080] [interval=2s] [error="dial tcp 192.168.241.26:10080: connect: connection refused"]

[2021/01/13 15:49:11.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.7:4000] [interval=2s] [error="dial tcp 192.168.241.7:4000: connect: connection refused"]

[2021/01/13 15:49:11.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.7:10080] [interval=2s] [error="dial tcp 192.168.241.7:10080: connect: connection refused"]

[2021/01/13 15:49:13.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.26:10080] [interval=2s] [error="dial tcp 192.168.241.26:10080: connect: connection refused"]

[2021/01/13 15:49:13.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.26:4000] [interval=2s] [error="dial tcp 192.168.241.26:4000: connect: connection refused"]

[2021/01/13 15:49:13.577 +08:00] [WARN] [proxy.go:181] ["fail to recv activity from remote, stay inactive and wait to next checking round"] [remote=192.168.241.7:4000] [interval=2s] [error="dial tcp 192.168.241.7:4000: connect: connection refused"]
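All the pd.log warnings in this excerpt are "connection refused" for remotes on ports 4000 and 10080 of 192.168.241.7 and 192.168.241.26, which are the two tidb instances shown as Down in the display output. That is consistent with TiDB simply not having been started yet, so these lines may not say anything about PD being unhealthy. To confirm the same holds for the whole log, the refused remotes can be tallied. This is a rough sketch that assumes the log line format shown above; the function name `count_refused_remotes` is made up for illustration.

```python
import re
from collections import Counter

# Matches the [remote=host:port] field seen in the pd.log lines above.
REMOTE_RE = re.compile(r"\[remote=([^\]]+)\]")


def count_refused_remotes(lines) -> Counter:
    """Count 'connection refused' warnings per remote address."""
    counts = Counter()
    for line in lines:
        if "connection refused" not in line:
            continue
        match = REMOTE_RE.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts
```

It can be fed an open file, e.g. `count_refused_remotes(open("pd.log"))`. If the result contains only tidb addresses, the pd.log warnings are probably a side effect of the aborted start rather than its cause.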

[tidb@b16 ~]$ tiup cluster -v

Starting component `cluster`: /home/tidb/.tiup/components/cluster/v1.3.1/tiup-cluster -v

tiup version v1.3.1 tiup

Go Version: go1.13

Git Branch: release-1.3

GitHash: d51bd0c