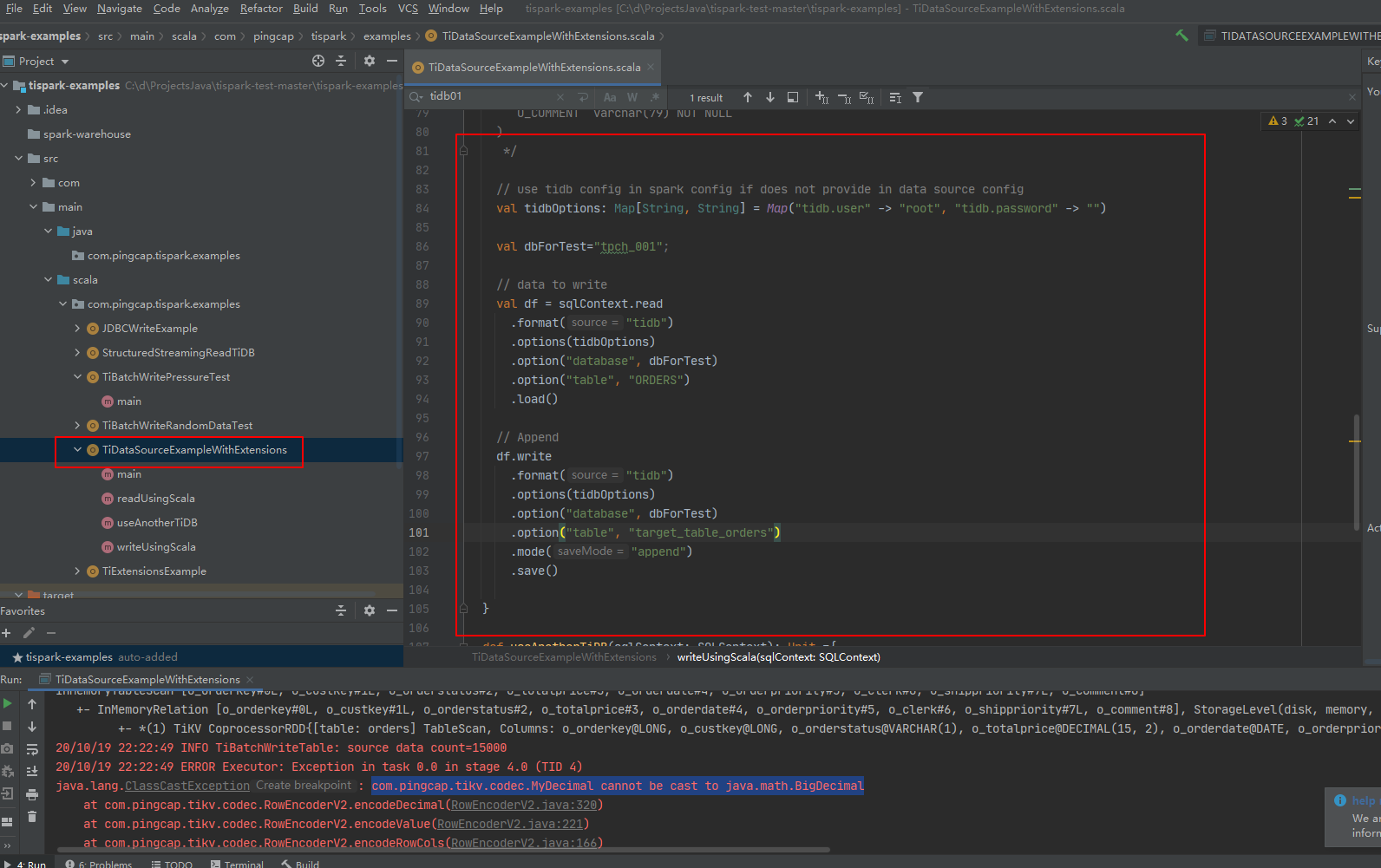

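I downloaded the example code from https://github.com/pingcap/tispark-test,

and adjusted the pom file and the database name in the code to match my own environment.

When I run the readUsingScala method of the TiDataSourceExampleWithExtensions class, it fails with an error.

Is this because the code needs additional configuration, or is it a problem with my local environment configuration?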

D:\dev\jdk1.8.0_141\bin\java.exe "-javaagent:C:\Program Files\JetBrains\IntelliJ IDEA Community Edition 2020.2.3\lib\idea_rt.jar=1107:C:\Program Files\JetBrains\IntelliJ IDEA Community Edition 2020.2.3\bin" -Dfile.encoding=UTF-8 -classpath D:\dev\jdk1.8.0_141\jre\lib\charsets.jar;D:\dev\jdk1.8.0_141\jre\lib\deploy.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\access-bridge-64.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\cldrdata.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\dnsns.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\jaccess.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\jfxrt.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\localedata.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\nashorn.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\sunec.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\sunjce_provider.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\sunmscapi.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\sunpkcs11.jar;D:\dev\jdk1.8.0_141\jre\lib\ext\zipfs.jar;D:\dev\jdk1.8.0_141\jre\lib\javaws.jar;D:\dev\jdk1.8.0_141\jre\lib\jce.jar;D:\dev\jdk1.8.0_141\jre\lib\jfr.jar;D:\dev\jdk1.8.0_141\jre\lib\jfxswt.jar;D:\dev\jdk1.8.0_141\jre\lib\jsse.jar;D:\dev\jdk1.8.0_141\jre\lib\management-agent.jar;D:\dev\jdk1.8.0_141\jre\lib\plugin.jar;D:\dev\jdk1.8.0_141\jre\lib\resources.jar;D:\dev\jdk1.8.0_141\jre\lib\rt.jar;C:\d\ProjectsJava\tispark-test-master\tispark-examples\target\classes;D:\dev\scala-2.11.12\lib\scala-actors-2.11.0.jar;D:\dev\scala-2.11.12\lib\scala-actors-migration_2.11-1.1.0.jar;D:\dev\scala-2.11.12\lib\scala-library.jar;D:\dev\scala-2.11.12\lib\scala-parser-combinators_2.11-1.0.4.jar;D:\dev\scala-2.11.12\lib\scala-reflect.jar;D:\dev\scala-2.11.12\lib\scala-swing_2.11-1.0.2.jar;D:\dev\scala-2.11.12\lib\scala-xml_2.11-1.0.5.jar;D:\dev\manvenrepos\org\apache\spark\spark-core_2.11\2.4.7\spark-core_2.11-2.4.7.jar;D:\dev\manvenrepos\com\thoughtworks\paranamer\paranamer\2.8\paranamer-2.8.jar;D:\dev\manvenrepos\org\apache\avro\avro\1.8.2\avro-1.8.2.jar;D:\dev\manvenrepos\org\codehaus\jackson\jackson-core-asl\1.9.13\jackson-core-asl-1.9.13.jar;D:\dev\manvenrepos\org\codehaus\jackson\jackson-mapper-asl\1.9.13\jackson-mapper-asl-1.9.13.jar;D:\dev\manvenrepos\org\apache\commons\commons-compress\1.8.1\commons-compress-1.8.1.jar;D:\dev\manvenrepos\org\tukaani\xz\1.5\xz-1.5.jar;D:\dev\manvenrepos\org\apache\avro\avro-mapred\1.8.2\avro-mapred-1.8.2-hadoop2.jar;D:\dev\manvenrepos\org\apache\avro\avro-ipc\1.8.2\avro-ipc-1.8.2.jar;D:\dev\manvenrepos\commons-codec\commons-codec\1.9\commons-codec-1.9.jar;D:\dev\manvenrepos\com\twitter\chill_2.11\0.9.3\chill_2.11-0.9.3.jar;D:\dev\manvenrepos\com\esotericsoftware\kryo-shaded\4.0.2\kryo-shaded-4.0.2.jar;D:\dev\manvenrepos\com\esotericsoftware\minlog\1.3.0\minlog-1.3.0.jar;D:\dev\manvenrepos\org\objenesis\objenesis\2.5.1\objenesis-2.5.1.jar;D:\dev\manvenrepos\com\twitter\chill-java\0.9.3\chill-java-0.9.3.jar;D:\dev\manvenrepos\org\apache\xbean\xbean-asm6-shaded\4.8\xbean-asm6-shaded-4.8.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-client\2.6.5\hadoop-client-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-common\2.6.5\hadoop-common-2.6.5.jar;D:\dev\manvenrepos\commons-cli\commons-cli\1.2\commons-cli-1.2.jar;D:\dev\manvenrepos\xmlenc\xmlenc\0.52\xmlenc-0.52.jar;D:\dev\manvenrepos\commons-httpclient\commons-httpclient\3.1\commons-httpclient-3.1.jar;D:\dev\manvenrepos\commons-io\commons-io\2.4\commons-io-2.4.jar;D:\dev\manvenrepos\commons-collections\commons-collections\3.2.2\commons-collections-3.2.2.jar;D:\dev\manvenrepos\commons-configuration\commons-configuration\1.6\commons-configuration-1.6.jar;D:\dev\manvenrepos\commons-digester\commons-digester\1.8\commons-digester-1.8.jar;D:\dev\manvenrepos\commons-beanutils\commons-beanutils\1.7.0\commons-beanutils-1.7.0.jar;D:\dev\manvenrepos\com\google\code\gson\gson\2.2.4\gson-2.2.4.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-auth\2.6.5\hadoop-auth-2.6.5.jar;D:\dev\manvenrepos\org\apache\directory\server\apacheds-kerberos-codec\2.0.0-M15\apacheds-kerberos-codec-2.0.0-M15.jar;D:\dev\manvenrepos\org\apache\directory\server\apacheds-i18n\2.0.0-M15\apacheds-i18n-2.0.0-M15.jar;D:\dev\manvenrepos\org\apache\directory\api\api-asn1-api\1.0.0-M20\api-asn1-api-1.0.0-M20.jar;D:\dev\manvenrepos\org\apache\directory\api\api-util\1.0.0-M20\api-util-1.0.0-M20.jar;D:\dev\manvenrepos\org\apache\curator\curator-client\2.6.0\curator-client-2.6.0.jar;D:\dev\manvenrepos\org\htrace\htrace-core\3.0.4\htrace-core-3.0.4.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-hdfs\2.6.5\hadoop-hdfs-2.6.5.jar;D:\dev\manvenrepos\org\mortbay\jetty\jetty-util\6.1.26\jetty-util-6.1.26.jar;D:\dev\manvenrepos\xerces\xercesImpl\2.9.1\xercesImpl-2.9.1.jar;D:\dev\manvenrepos\xml-apis\xml-apis\1.3.04\xml-apis-1.3.04.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-mapreduce-client-app\2.6.5\hadoop-mapreduce-client-app-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-mapreduce-client-common\2.6.5\hadoop-mapreduce-client-common-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-yarn-client\2.6.5\hadoop-yarn-client-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-yarn-server-common\2.6.5\hadoop-yarn-server-common-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-mapreduce-client-shuffle\2.6.5\hadoop-mapreduce-client-shuffle-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-yarn-api\2.6.5\hadoop-yarn-api-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-mapreduce-client-core\2.6.5\hadoop-mapreduce-client-core-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-yarn-common\2.6.5\hadoop-yarn-common-2.6.5.jar;D:\dev\manvenrepos\javax\xml\bind\jaxb-api\2.2.2\jaxb-api-2.2.2.jar;D:\dev\manvenrepos\javax\xml\stream\stax-api\1.0-2\stax-api-1.0-2.jar;D:\dev\manvenrepos\org\codehaus\jackson\jackson-jaxrs\1.9.13\jackson-jaxrs-1.9.13.jar;D:\dev\manvenrepos\org\codehaus\jackson\jackson-xc\1.9.13\jackson-xc-1.9.13.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-mapreduce-client-jobclient\2.6.5\hadoop-mapreduce-client-jobclient-2.6.5.jar;D:\dev\manvenrepos\org\apache\hadoop\hadoop-annotations\2.6.5\hadoop-annotations-2.6.5.jar;D:\dev\manvenrepos\org\apache\spark\spark-launcher_2.11\2.4.7\spark-launcher_2.11-2.4.7.jar;D:\dev\manvenrepos\org\apache\spark\spark-kvstore_2.11\2.4.7\spark-kvstore_2.11-2.4.7.jar;D:\dev\manvenrepos\org\fusesource\leveldbjni\leveldbjni-all\1.8\leveldbjni-all-1.8.jar;D:\dev\manvenrepos\com\fasterxml\jackson\core\jackson-core\2.6.7\jackson-core-2.6.7.jar;D:\dev\manvenrepos\com\fasterxml\jackson\core\jackson-annotations\2.6.7\jackson-annotations-2.6.7.jar;D:\dev\manvenrepos\org\apache\spark\spark-network-common_2.11\2.4.7\spark-network-common_2.11-2.4.7.jar;D:\dev\manvenrepos\org\apache\spark\spark-network-shuffle_2.11\2.4.7\spark-network-shuffle_2.11-2.4.7.jar;D:\dev\manvenrepos\org\apache\spark\spark-unsafe_2.11\2.4.7\spark-unsafe_2.11-2.4.7.jar;D:\dev\manvenrepos\javax\activation\activation\1.1.1\activation-1.1.1.jar;D:\dev\manvenrepos\org\apache\curator\curator-recipes\2.6.0\curator-recipes-2.6.0.jar;D:\dev\manvenrepos\org\apache\curator\curator-framework\2.6.0\curator-framework-2.6.0.jar;D:\dev\manvenrepos\com\google\guava\guava\16.0.1\guava-16.0.1.jar;D:\dev\manvenrepos\org\apache\zookeeper\zookeeper\3.4.6\zookeeper-3.4.6.jar;D:\dev\manvenrepos\javax\servlet\javax.servlet-api\3.1.0\javax.servlet-api-3.1.0.jar;D:\dev\manvenrepos\org\apache\commons\commons-lang3\3.5\commons-lang3-3.5.jar;D:\dev\manvenrepos\org\apache\commons\commons-math3\3.4.1\commons-math3-3.4.1.jar;D:\dev\manvenrepos\com\google\code\findbugs\jsr305\1.3.9\jsr305-1.3.9.jar;D:\dev\manvenrepos\org\slf4j\slf4j-api\1.7.16\slf4j-api-1.7.16.jar;D:\dev\manvenrepos\org\slf4j\jul-to-slf4j\1.7.16\jul-to-slf4j-1.7.16.jar;D:\dev\manvenrepos\org\slf4j\jcl-over-slf4j\1.7.16\jcl-over-slf4j-1.7.16.jar;D:\dev\manvenrepos\log4j\log4j\1.2.17\log4j-1.2.17.jar;D:\dev\manvenrepos\org\slf4j\slf4j-log4j12\1.7.16\slf4j-log4j12-1.7.16.jar;D:\dev\manvenrepos\com\ning\compress-lzf\1.0.3\compress-lzf-1.0.3.jar;D:\dev\manvenrepos\org\xerial\snappy\snappy-java\1.1.7.5\snappy-java-1.1.7.5.jar;D:\dev\manvenrepos\org\lz4\lz4-java\1.4.0\lz4-java-1.4.0.jar;D:\dev\manvenrepos\com\github\luben\zstd-jni\1.3.2-2\zstd-jni-1.3.2-2.jar;D:\dev\manvenrepos\org\roaringbitmap\RoaringBitmap\0.7.45\RoaringBitmap-0.7.45.jar;D:\dev\manvenrepos\org\roaringbitmap\shims\0.7.45\shims-0.7.45.jar;D:\dev\manvenrepos\commons-net\commons-net\3.1\commons-net-3.1.jar;D:\dev\manvenrepos\org\scala-lang\scala-library\2.11.12\scala-library-2.11.12.jar;D:\dev\manvenrepos\org\json4s\json4s-jackson_2.11\3.5.3\json4s-jackson_2.11-3.5.3.jar;D:\dev\manvenrepos\org\json4s\json4s-core_2.11\3.5.3\json4s-core_2.11-3.5.3.jar;D:\dev\manvenrepos\org\json4s\json4s-ast_2.11\3.5.3\json4s-ast_2.11-3.5.3.jar;D:\dev\manvenrepos\org\json4s\json4s-scalap_2.11\3.5.3\json4s-scalap_2.11-3.5.3.jar;D:\dev\manvenrepos\org\scala-lang\modules\scala-xml_2.11\1.0.6\scala-xml_2.11-1.0.6.jar;D:\dev\manvenrepos\org\glassfish\jersey\core\jersey-client\2.22.2\jersey-client-2.22.2.jar;D:\dev\manvenrepos\javax\ws\rs\javax.ws.rs-api\2.0.1\javax.ws.rs-api-2.0.1.jar;D:\dev\manvenrepos\org\glassfish\hk2\hk2-api\2.4.0-b34\hk2-api-2.4.0-b34.jar;D:\dev\manvenrepos\org\glassfish\hk2\hk2-utils\2.4.0-b34\hk2-utils-2.4.0-b34.jar;D:\dev\manvenrepos\org\glassfish\hk2\external\aopalliance-repackaged\2.4.0-b34\aopalliance-repackaged-2.4.0-b34.jar;D:\dev\manvenrepos\org\glassfish\hk2\external\javax.inject\2.4.0-b34\javax.inject-2.4.0-b34.jar;D:\dev\manvenrepos\org\glassfish\hk2\hk2-locator\2.4.0-b34\hk2-locator-2.4.0-b34.jar;D:\dev\manvenrepos\org\javassist\javassist\3.18.1-GA\javassist-3.18.1-GA.jar;D:\dev\manvenrepos\org\glassfish\jersey\core\jersey-common\2.22.2\jersey-common-2.22.2.jar;D:\dev\manvenrepos\javax\annotation\javax.annotation-api\1.2\javax.annotation-api-1.2.jar;D:\dev\manvenrepos\org\glassfish\jersey\bundles\repackaged\jersey-guava\2.22.2\jersey-guava-2.22.2.jar;D:\dev\manvenrepos\org\glassfish\hk2\osgi-resource-locator\1.0.1\osgi-resource-locator-1.0.1.jar;D:\dev\manvenrepos\org\glassfish\jersey\core\jersey-server\2.22.2\jersey-server-2.22.2.jar;D:\dev\manvenrepos\org\glassfish\jersey\media\jersey-media-jaxb\2.22.2\jersey-media-jaxb-2.22.2.jar;D:\dev\manvenrepos\javax\validation\validation-api\1.1.0.Final\validation-api-1.1.0.Final.jar;D:\dev\manvenrepos\org\glassfish\jersey\containers\jersey-container-servlet\2.22.2\jersey-container-servlet-2.22.2.jar;D:\dev\manvenrepos\org\glassfish\jersey\containers\jersey-container-servlet-core\2.22.2\jersey-container-servlet-core-2.22.2.jar;D:\dev\manvenrepos\io\netty\netty-all\4.1.47.Final\netty-all-4.1.47.Final.jar;D:\dev\manvenrepos\io\netty\netty\3.9.9.Final\netty-3.9.9.Final.jar;D:\dev\manvenrepos\com\clearspring\analytics\stream\2.7.0\stream-2.7.0.jar;D:\dev\manvenrepos\io\dropwizard\metrics\metrics-core\3.1.5\metrics-core-3.1.5.jar;D:\dev\manvenrepos\io\dropwizard\metrics\metrics-jvm\3.1.5\metrics-jvm-3.1.5.jar;D:\dev\manvenrepos\io\dropwizard\metrics\metrics-json\3.1.5\metrics-json-3.1.5.jar;D:\dev\manvenrepos\io\dropwizard\metrics\metrics-graphite\3.1.5\metrics-graphite-3.1.5.jar;D:\dev\manvenrepos\com\fasterxml\jackson\core\jackson-databind\2.6.7.3\jackson-databind-2.6.7.3.jar;D:\dev\manvenrepos\com\fasterxml\jackson\module\jackson-module-scala_2.11\2.6.7.1\jackson-module-scala_2.11-2.6.7.1.jar;D:\dev\manvenrepos\org\scala-lang\scala-reflect\2.11.8\scala-reflect-2.11.8.jar;D:\dev\manvenrepos\com\fasterxml\jackson\module\jackson-module-paranamer\2.7.9\jackson-module-paranamer-2.7.9.jar;D:\dev\manvenrepos\org\apache\ivy\ivy\2.4.0\ivy-2.4.0.jar;D:\dev\manvenrepos\oro\oro\2.0.8\oro-2.0.8.jar;D:\dev\manvenrepos\net\razorvine\pyrolite\4.13\pyrolite-4.13.jar;D:\dev\manvenrepos\net\sf\py4j\py4j\0.10.7\py4j-0.10.7.jar;D:\dev\manvenrepos\org\apache\spark\spark-tags_2.11\2.4.7\spark-tags_2.11-2.4.7.jar;D:\dev\manvenrepos\org\apache\commons\commons-crypto\1.0.0\commons-crypto-1.0.0.jar;D:\dev\manvenrepos\org\spark-project\spark\unused\1.0.0\unused-1.0.0.jar;D:\dev\manvenrepos\org\apache\spark\spark-sql_2.11\2.4.7\spark-sql_2.11-2.4.7.jar;D:\dev\manvenrepos\com\univocity\univocity-parsers\2.7.3\univocity-parsers-2.7.3.jar;D:\dev\manvenrepos\org\apache\spark\spark-sketch_2.11\2.4.7\spark-sketch_2.11-2.4.7.jar;D:\dev\manvenrepos\org\apache\spark\spark-catalyst_2.11\2.4.7\spark-catalyst_2.11-2.4.7.jar;D:\dev\manvenrepos\org\scala-lang\modules\scala-parser-combinators_2.11\1.1.0\scala-parser-combinators_2.11-1.1.0.jar;D:\dev\manvenrepos\org\codehaus\janino\janino\3.0.16\janino-3.0.16.jar;D:\dev\manvenrepos\org\codehaus\janino\commons-compiler\3.0.16\commons-compiler-3.0.16.jar;D:\dev\manvenrepos\org\antlr\antlr4-runtime\4.7\antlr4-runtime-4.7.jar;D:\dev\manvenrepos\org\apache\orc\orc-core\1.5.5\orc-core-1.5.5-nohive.jar;D:\dev\manvenrepos\org\apache\orc\orc-shims\1.5.5\orc-shims-1.5.5.jar;D:\dev\manvenrepos\com\google\protobuf\protobuf-java\2.5.0\protobuf-java-2.5.0.jar;D:\dev\manvenrepos\commons-lang\commons-lang\2.6\commons-lang-2.6.jar;D:\dev\manvenrepos\io\airlift\aircompressor\0.10\aircompressor-0.10.jar;D:\dev\manvenrepos\org\apache\orc\orc-mapreduce\1.5.5\orc-mapreduce-1.5.5-nohive.jar;D:\dev\manvenrepos\org\apache\parquet\parquet-column\1.10.1\parquet-column-1.10.1.jar;D:\dev\manvenrepos\org\apache\parquet\parquet-common\1.10.1\parquet-common-1.10.1.jar;D:\dev\manvenrepos\org\apache\parquet\parquet-encoding\1.10.1\parquet-encoding-1.10.1.jar;D:\dev\manvenrepos\org\apache\parquet\parquet-hadoop\1.10.1\parquet-hadoop-1.10.1.jar;D:\dev\manvenrepos\org\apache\parquet\parquet-format\2.4.0\parquet-format-2.4.0.jar;D:\dev\manvenrepos\org\apache\parquet\parquet-jackson\1.10.1\parquet-jackson-1.10.1.jar;D:\dev\manvenrepos\org\apache\arrow\arrow-vector\0.10.0\arrow-vector-0.10.0.jar;D:\dev\manvenrepos\org\apache\arrow\arrow-format\0.10.0\arrow-format-0.10.0.jar;D:\dev\manvenrepos\org\apache\arrow\arrow-memory\0.10.0\arrow-memory-0.10.0.jar;D:\dev\manvenrepos\joda-time\joda-time\2.9.9\joda-time-2.9.9.jar;D:\dev\manvenrepos\com\carrotsearch\hppc\0.7.2\hppc-0.7.2.jar;D:\dev\manvenrepos\com\vlkan\flatbuffers\1.2.0-3f79e055\flatbuffers-1.2.0-3f79e055.jar;D:\dev\manvenrepos\mysql\mysql-connector-java\5.1.44\mysql-connector-java-5.1.44.jar;D:\dev\manvenrepos\com\pingcap\tispark\tispark-assembly\2.3.4\tispark-assembly-2.3.4.jar;D:\dev\manvenrepos\com\pingcap\tispark\tispark-core-internal\2.3.4\tispark-core-internal-2.3.4.jar;D:\dev\manvenrepos\org\scalaj\scalaj-http_2.11\2.3.0\scalaj-http_2.11-2.3.0.jar;D:\dev\manvenrepos\org\apache\logging\log4j\log4j-api\2.8.1\log4j-api-2.8.1.jar;D:\dev\manvenrepos\org\apache\logging\log4j\log4j-core\2.13.2\log4j-core-2.13.2.jar;D:\dev\manvenrepos\org\apache\spark\spark-hive_2.11\2.3.4\spark-hive_2.11-2.3.4.jar;D:\dev\manvenrepos\com\twitter\parquet-hadoop-bundle\1.6.0\parquet-hadoop-bundle-1.6.0.jar;D:\dev\manvenrepos\org\spark-project\hive\hive-exec\1.2.1.spark2\hive-exec-1.2.1.spark2.jar;D:\dev\manvenrepos\javolution\javolution\5.5.1\javolution-5.5.1.jar;D:\dev\manvenrepos\log4j\apache-log4j-extras\1.2.17\apache-log4j-extras-1.2.17.jar;D:\dev\manvenrepos\org\antlr\antlr-runtime\3.4\antlr-runtime-3.4.jar;D:\dev\manvenrepos\org\antlr\stringtemplate\3.2.1\stringtemplate-3.2.1.jar;D:\dev\manvenrepos\antlr\antlr\2.7.7\antlr-2.7.7.jar;D:\dev\manvenrepos\org\antlr\ST4\4.0.4\ST4-4.0.4.jar;D:\dev\manvenrepos\com\googlecode\javaewah\JavaEWAH\0.3.2\JavaEWAH-0.3.2.jar;D:\dev\manvenrepos\org\iq80\snappy\snappy\0.2\snappy-0.2.jar;D:\dev\manvenrepos\stax\stax-api\1.0.1\stax-api-1.0.1.jar;D:\dev\manvenrepos\net\sf\opencsv\opencsv\2.3\opencsv-2.3.jar;D:\dev\manvenrepos\org\spark-project\hive\hive-metastore\1.2.1.spark2\hive-metastore-1.2.1.spark2.jar;D:\dev\manvenrepos\com\jolbox\bonecp\0.8.0.RELEASE\bonecp-0.8.0.RELEASE.jar;D:\dev\manvenrepos\commons-logging\commons-logging\1.1.3\commons-logging-1.1.3.jar;D:\dev\manvenrepos\org\datanucleus\datanucleus-api-jdo\3.2.6\datanucleus-api-jdo-3.2.6.jar;D:\dev\manvenrepos\org\datanucleus\datanucleus-rdbms\3.2.9\datanucleus-rdbms-3.2.9.jar;D:\dev\manvenrepos\commons-pool\commons-pool\1.5.4\commons-pool-1.5.4.jar;D:\dev\manvenrepos\commons-dbcp\commons-dbcp\1.4\commons-dbcp-1.4.jar;D:\dev\manvenrepos\javax\jdo\jdo-api\3.0.1\jdo-api-3.0.1.jar;D:\dev\manvenrepos\javax\transaction\jta\1.1\jta-1.1.jar;D:\dev\manvenrepos\org\apache\calcite\calcite-avatica\1.2.0-incubating\calcite-avatica-1.2.0-incubating.jar;D:\dev\manvenrepos\org\apache\calcite\calcite-core\1.2.0-incubating\calcite-core-1.2.0-incubating.jar;D:\dev\manvenrepos\org\apache\calcite\calcite-linq4j\1.2.0-incubating\calcite-linq4j-1.2.0-incubating.jar;D:\dev\manvenrepos\net\hydromatic\eigenbase-properties\1.1.5\eigenbase-properties-1.1.5.jar;D:\dev\manvenrepos\org\apache\httpcomponents\httpclient\4.5.4\httpclient-4.5.4.jar;D:\dev\manvenrepos\org\apache\httpcomponents\httpcore\4.4.7\httpcore-4.4.7.jar;D:\dev\manvenrepos\org\jodd\jodd-core\3.5.2\jodd-core-3.5.2.jar;D:\dev\manvenrepos\org\datanucleus\datanucleus-core\3.2.10\datanucleus-core-3.2.10.jar;D:\dev\manvenrepos\org\apache\thrift\libthrift\0.9.3\libthrift-0.9.3.jar;D:\dev\manvenrepos\org\apache\thrift\libfb303\0.9.3\libfb303-0.9.3.jar;D:\dev\manvenrepos\org\apache\derby\derby\10.12.1.1\derby-10.12.1.1.jar;D:\dev\manvenrepos\org\apache\spark\spark-hive-thriftserver_2.11\2.3.4\spark-hive-thriftserver_2.11-2.3.4.jar;D:\dev\manvenrepos\org\spark-project\hive\hive-cli\1.2.1.spark2\hive-cli-1.2.1.spark2.jar;D:\dev\manvenrepos\jline\jline\2.12\jline-2.12.jar;D:\dev\manvenrepos\org\spark-project\hive\hive-jdbc\1.2.1.spark2\hive-jdbc-1.2.1.spark2.jar;D:\dev\manvenrepos\org\spark-project\hive\hive-beeline\1.2.1.spark2\hive-beeline-1.2.1.spark2.jar;D:\dev\manvenrepos\net\sf\supercsv\super-csv\2.2.0\super-csv-2.2.0.jar;D:\dev\manvenrepos\net\sf\jpam\jpam\1.1\jpam-1.1.jar;D:\dev\manvenrepos\com\pingcap\tikv\tikv-client\2.3.4\tikv-client-2.3.4.jar com.pingcap.tispark.examples.TiDataSourceExampleWithExtensions

tidb01:2379

20/10/19 22:22:42 INFO TiSparkInfo$: Supported Spark Version: 2.3 2.4

Current Spark Version: 2.4.7

Current Spark Major Version: 2.4

20/10/19 22:22:48 INFO TiBatchWrite: startTS: 420255149165641729

== Parsed Logical Plan ==

Relation[o_orderkey#0L,o_custkey#1L,o_orderstatus#2,o_totalprice#3,o_orderdate#4,o_orderpriority#5,o_clerk#6,o_shippriority#7L,o_comment#8] TiDBRelation(TiTableReference(tpch_001,ORDERS,9223372036854775807), TiTimestamp(420255148549341185))

== Analyzed Logical Plan ==

o_orderkey: bigint, o_custkey: bigint, o_orderstatus: string, o_totalprice: decimal(15,2), o_orderdate: date, o_orderpriority: string, o_clerk: string, o_shippriority: bigint, o_comment: string

Relation[o_orderkey#0L,o_custkey#1L,o_orderstatus#2,o_totalprice#3,o_orderdate#4,o_orderpriority#5,o_clerk#6,o_shippriority#7L,o_comment#8] TiDBRelation(TiTableReference(tpch_001,ORDERS,9223372036854775807), TiTimestamp(420255148549341185))

== Optimized Logical Plan ==

InMemoryRelation [o_orderkey#0L, o_custkey#1L, o_orderstatus#2, o_totalprice#3, o_orderdate#4, o_orderpriority#5, o_clerk#6, o_shippriority#7L, o_comment#8], StorageLevel(disk, memory, deserialized, 1 replicas)

+- *(1) TiKV CoprocessorRDD{[table: orders] TableScan, Columns: o_orderkey@LONG, o_custkey@LONG, o_orderstatus@VARCHAR(1), o_totalprice@DECIMAL(15, 2), o_orderdate@DATE, o_orderpriority@VARCHAR(15), o_clerk@VARCHAR(15), o_shippriority@LONG, o_comment@VARCHAR(79), KeyRange: [([t\200\000\000\000\000\000\000Q_r\000\000\000\000\000\000\000\000], [t\200\000\000\000\000\000\000Q_s\000\000\000\000\000\000\000\000])], startTs: 420255148549341185}

== Physical Plan ==

InMemoryTableScan [o_orderkey#0L, o_custkey#1L, o_orderstatus#2, o_totalprice#3, o_orderdate#4, o_orderpriority#5, o_clerk#6, o_shippriority#7L, o_comment#8]

+- InMemoryRelation [o_orderkey#0L, o_custkey#1L, o_orderstatus#2, o_totalprice#3, o_orderdate#4, o_orderpriority#5, o_clerk#6, o_shippriority#7L, o_comment#8], StorageLevel(disk, memory, deserialized, 1 replicas)

+- *(1) TiKV CoprocessorRDD{[table: orders] TableScan, Columns: o_orderkey@LONG, o_custkey@LONG, o_orderstatus@VARCHAR(1), o_totalprice@DECIMAL(15, 2), o_orderdate@DATE, o_orderpriority@VARCHAR(15), o_clerk@VARCHAR(15), o_shippriority@LONG, o_comment@VARCHAR(79), KeyRange: [([t\200\000\000\000\000\000\000Q_r\000\000\000\000\000\000\000\000], [t\200\000\000\000\000\000\000Q_s\000\000\000\000\000\000\000\000])], startTs: 420255148549341185}

20/10/19 22:22:49 INFO TiBatchWriteTable: source data count=15000

20/10/19 22:22:49 ERROR Executor: Exception in task 0.0 in stage 4.0 (TID 4)

java.lang.ClassCastException: com.pingcap.tikv.codec.MyDecimal cannot be cast to java.math.BigDecimal

at com.pingcap.tikv.codec.RowEncoderV2.encodeDecimal(RowEncoderV2.java:320)

at com.pingcap.tikv.codec.RowEncoderV2.encodeValue(RowEncoderV2.java:221)

at com.pingcap.tikv.codec.RowEncoderV2.encodeRowCols(RowEncoderV2.java:166)

at com.pingcap.tikv.codec.RowEncoderV2.encode(RowEncoderV2.java:61)

at com.pingcap.tikv.codec.TableCodecV2.encodeRow(TableCodecV2.java:49)

at com.pingcap.tikv.codec.TableCodec.encodeRow(TableCodec.java:38)

at com.pingcap.tispark.write.TiBatchWriteTable.encodeTiRow(TiBatchWriteTable.scala:593)

at com.pingcap.tispark.write.TiBatchWriteTable.com$pingcap$tispark$write$TiBatchWriteTable$$generateRowKey(TiBatchWriteTable.scala:679)

at com.pingcap.tispark.write.TiBatchWriteTable$$anonfun$generateRecordKV$1.apply(TiBatchWriteTable.scala:687)

at com.pingcap.tispark.write.TiBatchWriteTable$$anonfun$generateRecordKV$1.apply(TiBatchWriteTable.scala:685)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:410)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:410)

at org.apache.spark.storage.memory.MemoryStore.putIterator(MemoryStore.scala:222)

at org.apache.spark.storage.memory.MemoryStore.putIteratorAsValues(MemoryStore.scala:299)

at org.apache.spark.storage.BlockManager$$anonfun$doPutIterator$1.apply(BlockManager.scala:1165)

at org.apache.spark.storage.BlockManager$$anonfun$doPutIterator$1.apply(BlockManager.scala:1156)

at org.apache.spark.storage.BlockManager.doPut(BlockManager.scala:1091)

at org.apache.spark.storage.BlockManager.doPutIterator(BlockManager.scala:1156)

at org.apache.spark.storage.BlockManager.getOrElseUpdate(BlockManager.scala:882)

at org.apache.spark.rdd.RDD.getOrCompute(RDD.scala:357)

at org.apache.spark.rdd.RDD.iterator(RDD.scala:308)

at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:90)

at org.apache.spark.scheduler.Task.run(Task.scala:123)

at org.apache.spark.executor.Executor$TaskRunner$$anonfun$10.apply(Executor.scala:408)

at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1360)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:414)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

20/10/19 22:22:49 ERROR TaskSetManager: Task 0 in stage 4.0 failed 1 times; aborting job

Exception in thread "main" org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 4.0 failed 1 times, most recent failure: Lost task 0.0 in stage 4.0 (TID 4, localhost, executor driver): java.lang.ClassCastException: com.pingcap.tikv.codec.MyDecimal cannot be cast to java.math.BigDecimal

at com.pingcap.tikv.codec.RowEncoderV2.encodeDecimal(RowEncoderV2.java:320)

at com.pingcap.tikv.codec.RowEncoderV2.encodeValue(RowEncoderV2.java:221)

at com.pingcap.tikv.codec.RowEncoderV2.encodeRowCols(RowEncoderV2.java:166)

at com.pingcap.tikv.codec.RowEncoderV2.encode(RowEncoderV2.java:61)

at com.pingcap.tikv.codec.TableCodecV2.encodeRow(TableCodecV2.java:49)

at com.pingcap.tikv.codec.TableCodec.encodeRow(TableCodec.java:38)

at com.pingcap.tispark.write.TiBatchWriteTable.encodeTiRow(TiBatchWriteTable.scala:593)

at com.pingcap.tispark.write.TiBatchWriteTable.com$pingcap$tispark$write$TiBatchWriteTable$$generateRowKey(TiBatchWriteTable.scala:679)

at com.pingcap.tispark.write.TiBatchWriteTable$$anonfun$generateRecordKV$1.apply(TiBatchWriteTable.scala:687)

at com.pingcap.tispark.write.TiBatchWriteTable$$anonfun$generateRecordKV$1.apply(TiBatchWriteTable.scala:685)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:410)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:410)

at org.apache.spark.storage.memory.MemoryStore.putIterator(MemoryStore.scala:222)

at org.apache.spark.storage.memory.MemoryStore.putIteratorAsValues(MemoryStore.scala:299)

at org.apache.spark.storage.BlockManager$$anonfun$doPutIterator$1.apply(BlockManager.scala:1165)

at org.apache.spark.storage.BlockManager$$anonfun$doPutIterator$1.apply(BlockManager.scala:1156)

at org.apache.spark.storage.BlockManager.doPut(BlockManager.scala:1091)

at org.apache.spark.storage.BlockManager.doPutIterator(BlockManager.scala:1156)

at org.apache.spark.storage.BlockManager.getOrElseUpdate(BlockManager.scala:882)

at org.apache.spark.rdd.RDD.getOrCompute(RDD.scala:357)

at org.apache.spark.rdd.RDD.iterator(RDD.scala:308)

at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:90)

at org.apache.spark.scheduler.Task.run(Task.scala:123)

at org.apache.spark.executor.Executor$TaskRunner$$anonfun$10.apply(Executor.scala:408)

at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1360)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:414)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Driver stacktrace:

at org.apache.spark.scheduler.DAGScheduler.org$apache$spark$scheduler$DAGScheduler$$failJobAndIndependentStages(DAGScheduler.scala:1925)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1913)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1912)

at scala.collection.mutable.ResizableArray$class.foreach(ResizableArray.scala:59)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:48)

at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:1912)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$handleTaskSetFailed$1.apply(DAGScheduler.scala:948)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$handleTaskSetFailed$1.apply(DAGScheduler.scala:948)

at scala.Option.foreach(Option.scala:257)

at org.apache.spark.scheduler.DAGScheduler.handleTaskSetFailed(DAGScheduler.scala:948)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.doOnReceive(DAGScheduler.scala:2146)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2095)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2084)

at org.apache.spark.util.EventLoop$$anon$1.run(EventLoop.scala:49)

at org.apache.spark.scheduler.DAGScheduler.runJob(DAGScheduler.scala:759)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2061)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2082)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2101)

at org.apache.spark.rdd.RDD$$anonfun$take$1.apply(RDD.scala:1409)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:112)

at org.apache.spark.rdd.RDD.withScope(RDD.scala:385)

at org.apache.spark.rdd.RDD.take(RDD.scala:1382)

at com.pingcap.tispark.write.TiBatchWrite.doWrite(TiBatchWrite.scala:203)

at com.pingcap.tispark.write.TiBatchWrite.com$pingcap$tispark$write$TiBatchWrite$$write(TiBatchWrite.scala:87)

at com.pingcap.tispark.write.TiBatchWrite$.write(TiBatchWrite.scala:45)

at com.pingcap.tispark.write.TiDBWriter$.write(TiDBWriter.scala:40)

at com.pingcap.tispark.TiDBDataSource.createRelation(TiDBDataSource.scala:57)

at org.apache.spark.sql.execution.datasources.SaveIntoDataSourceCommand.run(SaveIntoDataSourceCommand.scala:45)

at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult$lzycompute(commands.scala:70)

at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult(commands.scala:68)

at org.apache.spark.sql.execution.command.ExecutedCommandExec.doExecute(commands.scala:86)

at org.apache.spark.sql.execution.SparkPlan$$anonfun$execute$1.apply(SparkPlan.scala:131)

at org.apache.spark.sql.execution.SparkPlan$$anonfun$execute$1.apply(SparkPlan.scala:127)

at org.apache.spark.sql.execution.SparkPlan$$anonfun$executeQuery$1.apply(SparkPlan.scala:155)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)

at org.apache.spark.sql.execution.SparkPlan.executeQuery(SparkPlan.scala:152)

at org.apache.spark.sql.execution.SparkPlan.execute(SparkPlan.scala:127)

at org.apache.spark.sql.execution.QueryExecution.toRdd$lzycompute(QueryExecution.scala:83)

at org.apache.spark.sql.execution.QueryExecution.toRdd(QueryExecution.scala:81)

at org.apache.spark.sql.DataFrameWriter$$anonfun$runCommand$1.apply(DataFrameWriter.scala:696)

at org.apache.spark.sql.DataFrameWriter$$anonfun$runCommand$1.apply(DataFrameWriter.scala:696)

at org.apache.spark.sql.execution.SQLExecution$$anonfun$withNewExecutionId$1.apply(SQLExecution.scala:80)

at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:127)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:75)

at org.apache.spark.sql.DataFrameWriter.runCommand(DataFrameWriter.scala:696)

at org.apache.spark.sql.DataFrameWriter.saveToV1Source(DataFrameWriter.scala:305)

at org.apache.spark.sql.DataFrameWriter.save(DataFrameWriter.scala:291)

at com.pingcap.tispark.examples.TiDataSourceExampleWithExtensions$.writeUsingScala(TiDataSourceExampleWithExtensions.scala:103)

at com.pingcap.tispark.examples.TiDataSourceExampleWithExtensions$.main(TiDataSourceExampleWithExtensions.scala:46)

at com.pingcap.tispark.examples.TiDataSourceExampleWithExtensions.main(TiDataSourceExampleWithExtensions.scala)

Caused by: java.lang.ClassCastException: com.pingcap.tikv.codec.MyDecimal cannot be cast to java.math.BigDecimal

at com.pingcap.tikv.codec.RowEncoderV2.encodeDecimal(RowEncoderV2.java:320)

at com.pingcap.tikv.codec.RowEncoderV2.encodeValue(RowEncoderV2.java:221)

at com.pingcap.tikv.codec.RowEncoderV2.encodeRowCols(RowEncoderV2.java:166)

at com.pingcap.tikv.codec.RowEncoderV2.encode(RowEncoderV2.java:61)

at com.pingcap.tikv.codec.TableCodecV2.encodeRow(TableCodecV2.java:49)

at com.pingcap.tikv.codec.TableCodec.encodeRow(TableCodec.java:38)

at com.pingcap.tispark.write.TiBatchWriteTable.encodeTiRow(TiBatchWriteTable.scala:593)

at com.pingcap.tispark.write.TiBatchWriteTable.com$pingcap$tispark$write$TiBatchWriteTable$$generateRowKey(TiBatchWriteTable.scala:679)

at com.pingcap.tispark.write.TiBatchWriteTable$$anonfun$generateRecordKV$1.apply(TiBatchWriteTable.scala:687)

at com.pingcap.tispark.write.TiBatchWriteTable$$anonfun$generateRecordKV$1.apply(TiBatchWriteTable.scala:685)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:410)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:410)

at org.apache.spark.storage.memory.MemoryStore.putIterator(MemoryStore.scala:222)

at org.apache.spark.storage.memory.MemoryStore.putIteratorAsValues(MemoryStore.scala:299)

at org.apache.spark.storage.BlockManager$$anonfun$doPutIterator$1.apply(BlockManager.scala:1165)

at org.apache.spark.storage.BlockManager$$anonfun$doPutIterator$1.apply(BlockManager.scala:1156)

at org.apache.spark.storage.BlockManager.doPut(BlockManager.scala:1091)

at org.apache.spark.storage.BlockManager.doPutIterator(BlockManager.scala:1156)

at org.apache.spark.storage.BlockManager.getOrElseUpdate(BlockManager.scala:882)

at org.apache.spark.rdd.RDD.getOrCompute(RDD.scala:357)

at org.apache.spark.rdd.RDD.iterator(RDD.scala:308)

at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:90)

at org.apache.spark.scheduler.Task.run(Task.scala:123)

at org.apache.spark.executor.Executor$TaskRunner$$anonfun$10.apply(Executor.scala:408)

at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1360)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:414)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Process finished with exit code 1