To help resolve this efficiently, please provide the following information; a clear problem description gets answered faster:

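【TiDB Environment】

otter consumes MySQL/Mycat binlog and writes it to TiDB

Write-heavy, read-light workload

Configuration

tidb-server: 32C * 64G * 5

tidb-pd: 32C * 64G * 3

tidb-kv: 32C * 128G * 8

【Overview】 Scenario + problem summary

【Background】 Operations performed

A large volume of INSERT and UPDATE statements

【Symptoms】 Application and database behavior

Writes are slow

【Problem】 Current issue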

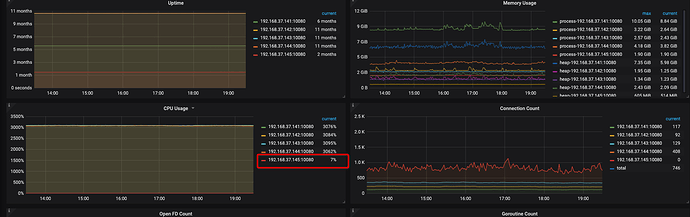

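1. High CPU utilization: CPU utilization stays close to 95% for long periods.

2. Uneven load: of the five tidb-server machines, four are under extremely high load and one has no load at all.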

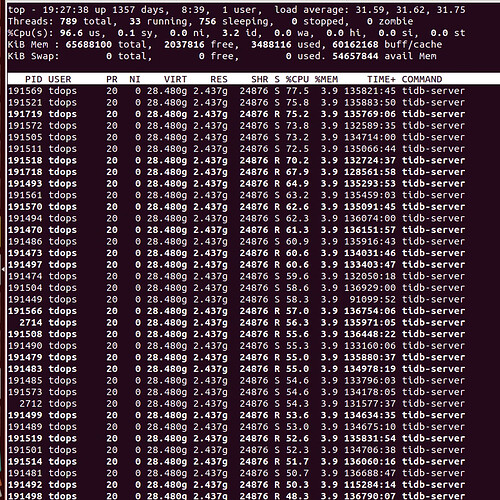

top -H

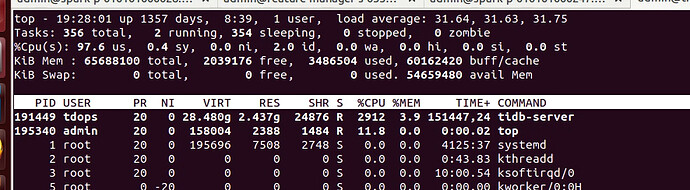

top

CPU

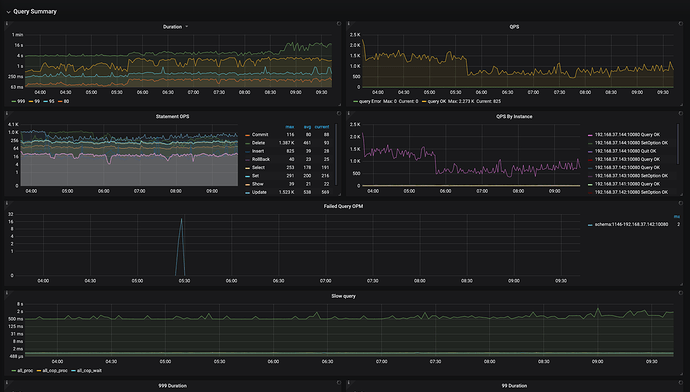

OPS
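
The uneven load described above can be cross-checked by comparing how many client connections each tidb-server holds. The following is a minimal sketch, assuming the `/status` endpoint on the status port (10080 here) returns JSON with a `connections` field; the host names in the usage note and the 5% cutoff are hypothetical, chosen only for illustration.

```python
import json
from urllib.request import urlopen


def fetch_status(host, port=10080, timeout=5):
    """Return the JSON document served by a tidb-server's /status endpoint."""
    with urlopen(f"http://{host}:{port}/status", timeout=timeout) as resp:
        return json.load(resp)


def connection_shares(conns):
    """Map each host to its fraction of all client connections."""
    total = sum(conns.values())
    return {h: (c / total if total else 0.0) for h, c in conns.items()}


def idle_hosts(conns, min_share=0.05):
    """Hosts holding less than min_share of all connections (assumed cutoff)."""
    shares = connection_shares(conns)
    return sorted(h for h, s in shares.items() if s < min_share)
```

A possible use, with placeholder host names: build `conns = {h: fetch_status(h)["connections"] for h in ["tidb-1", "tidb-2", "tidb-3", "tidb-4", "tidb-5"]}` and print `idle_hosts(conns)`. If the unloaded machine shows up with zero connections, the imbalance may come from how otter or the load balancer in front of TiDB distributes connections rather than from TiDB itself.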

curl -G "http://{TiDBIP}:10080/debug/zip?seconds=30" > profile.zip

profile_20220113.zip (1.6 MB)

curl http://127.0.0.1:10080/debug/zip --output tidb_debug.zip

tidb_debug_20220113.zip (1.6 MB)
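
Since four servers are busy and one is idle, it may help to collect the same debug archive from every tidb-server so the profiles can be compared side by side. This is a rough sketch that wraps the `/debug/zip` request shown above; the function names, the output file naming, and the extra 60 seconds of timeout are assumptions for illustration.

```python
import shutil
from urllib.parse import urlencode
from urllib.request import urlopen


def debug_zip_url(host, port=10080, seconds=30):
    """Build the /debug/zip URL for one tidb-server status port."""
    return f"http://{host}:{port}/debug/zip?{urlencode({'seconds': seconds})}"


def download_debug_zip(host, dest, port=10080, seconds=30):
    """Stream the debug archive of one tidb-server into the file dest."""
    # The request blocks while the server samples, so allow extra time
    # on top of the sampling window (60 s is an assumed margin).
    url = debug_zip_url(host, port, seconds)
    with urlopen(url, timeout=seconds + 60) as resp, open(dest, "wb") as out:
        shutil.copyfileobj(resp, out)
    return dest
```

For example, calling `download_debug_zip(host, f"tidb_debug_{host}.zip")` once per tidb-server address would produce one archive per machine.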

【Business Impact】

None so far

【TiDB Version】

V3.0.10

【Application Software and Version】

【Attachments】 Relevant logs and configuration

- TiDB-Overview Grafana monitoring: Cluster-Overview_2022-01-13T11_23_37.863Z.json (66.8 KB)
- TiDB Grafana monitoring: Cluster-TiDB_2022-01-13T11_23_59.233Z.json (439.7 KB)
- TiKV Grafana monitoring
- PD Grafana monitoring
- Logs of the relevant modules (covering 1 hour before and after the issue)

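For performance-tuning or troubleshooting questions, please download and run the script. Be sure to select the entire terminal output, then copy, paste, and upload it.

{'tidb_log_dir': '{{ deploy_dir }}/log', 'dummy': None, 'tidb_port': 4000, 'tidb_status_port': 10080, 'tidb_cert_dir': '{{ deploy_dir }}/conf/ssl'}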

TiDB cluster information

| TiDB_version | Clu_replicas | TiDB | PD | TiKV |
| --- | --- | --- | --- | --- |
| 5.7.25-TiDB-v3.0.10 | 3 | 5 | 3 | 22 |

Cluster node information

| Node_IP | Server_info |
| --- | --- |
| instance_0 | pd |
| instance_1 | pd |
| instance_2 | tidb |
| instance_3 | tidb |
| instance_4 | tidb |
| instance_5 | tidb |
| instance_6 | tikv+tikv |
| instance_7 | tidb |
| instance_8 | pd |
| instance_9 | tikv+tikv |
| instance_10 | tikv+tikv |
| instance_11 | tikv+tikv |
| instance_12 | tikv+tikv |
| instance_13 | tikv+tikv |
| instance_14 | tikv+tikv |
| instance_15 | tikv+tikv |
| instance_16 | tikv+tikv |
| instance_17 | tikv+tikv |
| instance_18 | tikv+tikv |

Capacity & Region count

| Storage_capacity_GB | Storage_used_GB | Region_count |
| --- | --- | --- |
| 108039.43 | 15925.94 | 597273 |

QPS

| Clu_QPS | Duration_99_MS | Duration_999_MS |
| --- | --- | --- |
| 2966.58 | 890.88 | 97189.89 |

Hot Region information

| Store | Hot_read | Hot_write |
| --- | --- | --- |
| store-4264984 | 1 | 1 |
| store-4264985 | 2 | 1 |
| store-4265539 | 0 | 0 |
| store-21343214 | 1 | 1 |
| store-24 | 0 | 0 |
| store-20 | 2 | 0 |
| store-21 | 0 | 0 |
| store-22 | 0 | 0 |
| store-23 | 0 | 0 |
| store-1 | 2 | 0 |
| store-5 | 0 | 0 |
| store-4 | 2 | 0 |
| store-7 | 2 | 1 |
| store-6 | 0 | 0 |
| store-9 | 2 | 1 |
| store-8 | 2 | 1 |
| store-4265540 | 2 | 0 |
| store-11149091 | 2 | 0 |
| store-8241578 | 2 | 0 |
| store-8241558 | 0 | 0 |
| store-11 | 2 | 1 |
| store-10 | 3 | 1 |
| store-13 | 2 | 0 |
| store-12 | 2 | 0 |
| store-15 | 2 | 1 |
| store-14 | 2 | 0 |
| store-17 | 2 | 0 |
| store-16 | 2 | 1 |
| store-19 | 1 | 1 |
| store-18 | 2 | 1 |

Disk latency information

| Device | Instance | Read_lat_MS | Write_lat_MS |
| --- | --- | --- | --- |
| sda | instance_12 | 0.00 | 10.00 |
| sda | instance_11 | 0.20 | 15.00 |
| sda | instance_10 | nan | nan |
| sda | instance_9 | 0.82 | 6.00 |
| sda | instance_16 | nan | 11.00 |
| sda | instance_15 | 0.12 | 10.33 |
| sda | instance_14 | 5.07 | 19.17 |
| sda | instance_13 | nan | nan |
| sda | instance_18 | nan | 12.83 |
| sda | instance_17 | nan | 15.00 |
| sda | instance_6 | 0.09 | 7.00 |
| sda | instance_7 | nan | 0.00 |
| sda | instance_4 | nan | 0.00 |
| sda | instance_5 | nan | 0.00 |
| sda | instance_2 | nan | 0.00 |
| sda | instance_3 | nan | 0.00 |
| sda | instance_0 | nan | 0.00 |
| sda | instance_1 | nan | 0.00 |
| sda | instance_8 | nan | 0.00 |
| sdb | instance_12 | 0.46 | 2.21 |
| sdb | instance_11 | 0.55 | 1.64 |
| sdb | instance_10 | 0.64 | 1.36 |
| sdb | instance_9 | 0.19 | 2.14 |
| sdb | instance_16 | 0.22 | 2.45 |
| sdb | instance_15 | 0.12 | nan |
| sdb | instance_14 | 0.48 | 2.16 |
| sdb | instance_13 | 0.47 | 2.14 |
| sdb | instance_18 | 0.68 | 1.98 |
| sdb | instance_17 | 0.37 | 2.12 |
| sdb | instance_6 | 0.44 | 2.04 |
| sdb | instance_7 | nan | nan |
| sdb | instance_4 | nan | nan |
| sdb | instance_5 | nan | 0.00 |
| sdb | instance_2 | nan | 0.00 |
| sdb | instance_3 | nan | 0.00 |
| sdb | instance_0 | nan | 0.00 |
| sdb | instance_1 | nan | 0.05 |
| sdb | instance_8 | nan | 0.12 |
| sdc | instance_12 | 0.19 | 4.03 |
| sdc | instance_11 | 0.45 | 3.31 |
| sdc | instance_10 | 0.34 | 2.14 |
| sdc | instance_9 | 0.12 | 1.80 |
| sdc | instance_16 | 0.13 | 3.06 |
| sdc | instance_15 | 0.13 | 0.39 |
| sdc | instance_14 | 0.67 | 2.14 |
| sdc | instance_13 | 0.57 | 1.94 |
| sdc | instance_18 | 0.35 | 2.00 |
| sdc | instance_17 | 0.35 | 1.34 |
| sdc | instance_6 | 0.36 | 1.77 |
| sdc | instance_7 | nan | nan |
| sdc | instance_4 | nan | nan |
| sdc | instance_5 | nan | nan |
| sdc | instance_2 | nan | nan |
| sdc | instance_3 | nan | nan |
| sdc | instance_0 | nan | nan |
| sdc | instance_1 | nan | nan |
| sdc | instance_8 | nan | nan |
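
The disk latency rows are easier to scan once filtered. Below is a small sketch that parses pipe-delimited rows like those in the table above and lists the devices whose write latency is at or above a threshold. The 10 ms threshold is an assumption picked for illustration, not a TiDB recommendation, and `SAMPLE` holds only a few rows copied from the table.

```python
import math


def _is_separator(cells):
    """True for rule rows whose cells contain only dashes or colons."""
    return all(c and set(c) <= set("-:") for c in cells)


def parse_pipe_table(text):
    """Parse lines starting with '|' into dicts keyed by the header row."""
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skips blank lines and '+---+' border lines
        cells = [c.strip() for c in line.strip("|").split("|")]
        if not _is_separator(cells):
            rows.append(cells)
    header, body = rows[0], rows[1:]
    return [dict(zip(header, r)) for r in body]


def slow_writers(rows, threshold_ms=10.0):
    """Return (device, instance, latency) with latency >= threshold, worst first."""
    found = []
    for r in rows:
        lat = float(r["Write_lat_MS"])  # "nan" parses to float NaN
        if not math.isnan(lat) and lat >= threshold_ms:
            found.append((r["Device"], r["Instance"], lat))
    return sorted(found, key=lambda t: -t[2])


SAMPLE = """
| Device | Instance | Read_lat_MS | Write_lat_MS |
| sda | instance_12 | 0.00 | 10.00 |
| sda | instance_14 | 5.07 | 19.17 |
| sda | instance_10 | nan | nan |
| sdb | instance_12 | 0.46 | 2.21 |
| sdc | instance_12 | 0.19 | 4.03 |
"""

for device, instance, lat in slow_writers(parse_pipe_table(SAMPLE)):
    print(f"{device} on {instance}: write latency {lat:.2f} ms")
```

In the full table, the higher write latencies appear on `sda` of the TiKV hosts. Whether that matters depends on which device holds the TiKV data directories, which the post does not state.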