[500]:"cannot build operator for region with no leader"

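Hello, is there any way to deal with this kind of error?

- Which operation exactly produced this error?

- Please describe the situation in more detail, including the version. Thanks.

The version is 3.1.0.

Earlier, the disk on the node with store_id 4 failed. We replaced the disk and then added the node back.

To force the store offline, I ran: curl -v -X DELETE http://192.168.241.49:12379/pd/api/v1/store/4?force=true

Then, on every KV node, I ran: ./tikv-ctl --db /disk1/tikv/data/db unsafe-recover remove-fail-stores -s 4 --all-regions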

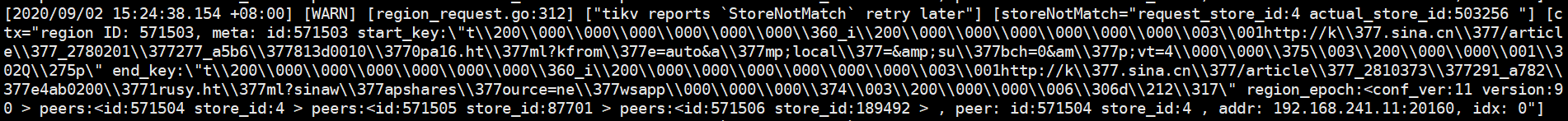

The DB nodes still report the following error:

Try using PD to move the Regions off this node.

» region store 4

    {
      "count": 1,
      "regions": [
        {
          "id": 571503,
          "start_key": "7480000000000000FFF05F698000000000FF0000030168747470FF3A2F2F6BFF2E7369FF6E612E636EFF2F61FF727469636C65FF5FFF32373830323031FFFF3237375F61356236FFFF38313364303031FF30FF30706131362EFF6874FF6D6C3F6B66FF726F6DFF653D6175FF746F2661FF6D703BFF6C6F63616CFF3D26FF616D703B7375FF62FF63683D3026616DFFFF703B76743D340000FFFD0380000001C251FFBD70000000000000F9",
          "end_key": "7480000000000000FFF05F698000000000FF0000030168747470FF3A2F2F6BFF2E7369FF6E612E636EFF2F61FF727469636C65FF5FFF32383130333733FFFF3239315F61373832FFFF65346162303230FF30FF31727573792EFF6874FF6D6C3F7369FF6E6177FF61707368FF61726573FF6F7572FF63653D6E65FF7773FF617070000000FC03FF80000006C6648ACFFF0000000000000000F7",
          "epoch": {
            "conf_ver": 11,
            "version": 90
          },
          "peers": [
            {
              "id": 571504,
              "store_id": 4
            },
            {
              "id": 571505,
              "store_id": 87701
            },
            {
              "id": 571506,
              "store_id": 189492
            }
          ],
          "written_bytes": 0,
          "read_bytes": 0,
          "written_keys": 0,
          "read_keys": 0,
          "approximate_size": 0,
          "approximate_keys": 0
        }
      ]
    }

» operator add remove-peer 571503 4

[500]:"cannot build operator for region with no leader"
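To see at a glance which Regions still list a peer on the removed store, the JSON printed by `region store 4` can be filtered with a small script. This is only a sketch: `peers_on_store` is an illustrative helper, not part of pd-ctl, the sample is trimmed from the output above, and it assumes the output was saved as valid JSON with straight quotes.

```python
import json

# Trimmed sample in the shape printed by `region store 4` above
# (keys and traffic counters omitted for brevity).
SAMPLE = """
{
  "count": 1,
  "regions": [
    {
      "id": 571503,
      "epoch": {"conf_ver": 11, "version": 90},
      "peers": [
        {"id": 571504, "store_id": 4},
        {"id": 571505, "store_id": 87701},
        {"id": 571506, "store_id": 189492}
      ]
    }
  ]
}
"""


def peers_on_store(regions_json: str, store_id: int) -> dict:
    """Map region id -> store ids of the other peers, for every region
    that still lists a peer on `store_id`. (Hypothetical helper.)"""
    data = json.loads(regions_json)
    result = {}
    for region in data.get("regions", []):
        stores = [peer["store_id"] for peer in region.get("peers", [])]
        if store_id in stores:
            result[region["id"]] = [s for s in stores if s != store_id]
    return result


if __name__ == "__main__":
    for region_id, others in peers_on_store(SAMPLE, 4).items():
        print(f"region {region_id}: remaining peers on stores {others}")
```

For the sample this reports Region 571503 with remaining peers on stores 87701 and 189492, which are the two stores worth inspecting with tikv-ctl.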

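With 3 replicas, if this node's disk is damaged, scaling in the node and then scaling it out again should be enough.

That is exactly what I did: scaled in first, then scaled out. But the log keeps printing that error.

- Please share the current output of store and config show all from pd-ctl. Thanks.

- Which deployment method are you using, TiUP or Ansible, and how did you perform the scale-in and scale-out? Thanks.

Version 3.1.0, deployed with Ansible.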

[tidb@b16 ~]$ pd-ctl -u http://192.168.241.49:12379

» store

    {
      "count": 4,
      "stores": [
        {
          "store": {
            "id": 326765,
            "address": "192.168.241.58:20160",
            "version": "3.1.0",
            "state_name": "Up"
          },
          "status": {
            "capacity": "1.471TiB",
            "available": "489GiB",
            "used_size": "1004GiB",
            "leader_count": 17783,
            "leader_weight": 4,
            "leader_score": 4445.75,
            "leader_size": 1385461,
            "region_count": 43666,
            "region_weight": 8,
            "region_score": 34006197.58264992,
            "region_size": 3448527,
            "receiving_snap_count": 1,
            "start_ts": "2020-08-31T17:17:16+08:00",
            "last_heartbeat_ts": "2020-09-03T11:02:47.298659938+08:00",
            "uptime": "65h45m31.298659938s"
          }
        },
        {
          "store": {
            "id": 503256,
            "address": "192.168.241.11:20160",
            "version": "3.1.0",
            "state_name": "Up"
          },
          "status": {
            "capacity": "1.791TiB",
            "available": "815.3GiB",
            "used_size": "997GiB",
            "leader_count": 13334,
            "leader_weight": 3,
            "leader_score": 4444.666666666667,
            "leader_size": 1084677,
            "region_count": 15650,
            "region_weight": 6,
            "region_score": 210705,
            "region_size": 1264230,
            "start_ts": "2020-09-01T16:27:27+08:00",
            "last_heartbeat_ts": "2020-09-03T11:02:51.993864908+08:00",
            "uptime": "42h35m24.993864908s"
          }
        },
        {
          "store": {
            "id": 87701,
            "address": "192.168.241.53:20160",
            "version": "3.1.0",
            "state_name": "Up"
          },
          "status": {
            "capacity": "1.791TiB",
            "available": "595GiB",
            "used_size": "1.197TiB",
            "leader_count": 17782,
            "leader_weight": 4,
            "leader_score": 4445.5,
            "leader_size": 1396514,
            "region_count": 53125,
            "region_weight": 8,
            "region_score": 34114595.571178645,
            "region_size": 4186345,
            "sending_snap_count": 2,
            "start_ts": "2020-08-31T17:14:55+08:00",
            "last_heartbeat_ts": "2020-09-03T11:02:52.350811847+08:00",
            "uptime": "65h47m57.350811847s"
          }
        },
        {
          "store": {
            "id": 189492,
            "address": "192.168.241.56:20160",
            "version": "3.1.0",
            "state_name": "Up"
          },
          "status": {
            "capacity": "1.791TiB",
            "available": "638.5GiB",
            "used_size": "1.156TiB",
            "leader_count": 13337,
            "leader_weight": 3,
            "leader_score": 4445.666666666667,
            "leader_size": 1052537,
            "region_count": 51221,
            "region_weight": 6,
            "region_score": 31401846.97559206,
            "region_size": 4032724,
            "start_ts": "2020-09-01T16:17:56+08:00",
            "last_heartbeat_ts": "2020-09-03T11:02:52.188856233+08:00",
            "uptime": "42h44m56.188856233s"
          }
        }
      ]
    }
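A quick way to check which stores PD currently reports, and whether the two surviving peers of Region 571503 are on stores that are Up, is to pull the IDs and states out of the `store` output. A minimal sketch, assuming the output was saved as valid JSON; `store_states` is an illustrative name and the sample keeps only three fields per store from the output above.

```python
import json

# Reduced copy of the `store` output above: only id, address and state.
SAMPLE = """
{
  "count": 4,
  "stores": [
    {"store": {"id": 326765, "address": "192.168.241.58:20160", "state_name": "Up"}},
    {"store": {"id": 503256, "address": "192.168.241.11:20160", "state_name": "Up"}},
    {"store": {"id": 87701, "address": "192.168.241.53:20160", "state_name": "Up"}},
    {"store": {"id": 189492, "address": "192.168.241.56:20160", "state_name": "Up"}}
  ]
}
"""


def store_states(stores_json: str) -> dict:
    """Return {store_id: state_name} for every store in the output.
    (Hypothetical helper, not a pd-ctl feature.)"""
    data = json.loads(stores_json)
    return {
        entry["store"]["id"]: entry["store"]["state_name"]
        for entry in data.get("stores", [])
    }


if __name__ == "__main__":
    states = store_states(SAMPLE)
    print("store 4 listed:", 4 in states)
    for store_id in (87701, 189492):
        print(f"store {store_id}: {states.get(store_id, 'not listed')}")
```

With this sample, store 4 is absent from the list, while stores 87701 and 189492 are both reported as Up.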

»

» config show all

    {
      "client-urls": "http://192.168.241.49:12379",
      "peer-urls": "http://192.168.241.49:12380",
      "advertise-client-urls": "http://192.168.241.49:12379",
      "advertise-peer-urls": "http://192.168.241.49:12380",
      "name": "pd_b49",
      "data-dir": "/disk1/pd/data.pd",
      "force-new-cluster": false,
      "enable-grpc-gateway": true,
      "initial-cluster": "pd_b24=http://192.168.241.24:12380,pd_b26=http://192.168.241.26:12380,pd_b49=http://192.168.241.49:12380",
      "initial-cluster-state": "new",
      "join": "",
      "lease": 3,
      "log": {
        "level": "",
        "format": "",
        "disable-timestamp": false,
        "file": {
          "filename": "",
          "max-size": 0,
          "max-days": 0,
          "max-backups": 0
        },
        "development": false,
        "disable-caller": false,
        "disable-stacktrace": false,
        "disable-error-verbose": true,
        "sampling": null
      },
      "log-file": "",
      "log-level": "",
      "tso-save-interval": "3s",
      "metric": {
        "job": "pd_b49",
        "address": "",
        "interval": "15s"
      },
      "schedule": {
        "max-snapshot-count": 16,
        "max-pending-peer-count": 64,
        "max-merge-region-size": 20,
        "max-merge-region-keys": 200000,
        "split-merge-interval": "1h0m0s",
        "enable-one-way-merge": "false",
        "enable-cross-table-merge": "false",
        "patrol-region-interval": "100ms",
        "max-store-down-time": "30m0s",
        "leader-schedule-limit": 16,
        "leader-schedule-policy": "count",
        "region-schedule-limit": 16,
        "replica-schedule-limit": 16,
        "merge-schedule-limit": 16,
        "hot-region-schedule-limit": 4,
        "hot-region-cache-hits-threshold": 3,
        "store-balance-rate": 15,
        "tolerant-size-ratio": 5,
        "low-space-ratio": 0.9,
        "high-space-ratio": 0.6,
        "scheduler-max-waiting-operator": 3,
        "enable-remove-down-replica": "true",
        "enable-replace-offline-replica": "true",
        "enable-make-up-replica": "true",
        "enable-remove-extra-replica": "true",
        "enable-location-replacement": "true",
        "enable-debug-metrics": "false",
        "schedulers-v2": [
          {
            "type": "balance-region",
            "args": null,
            "disable": false,
            "args-payload": ""
          },
          {
            "type": "balance-leader",
            "args": null,
            "disable": false,
            "args-payload": ""
          },
          {
            "type": "hot-region",
            "args": null,
            "disable": false,
            "args-payload": ""
          },
          {
            "type": "label",
            "args": null,
            "disable": false,
            "args-payload": ""
          }
        ],
        "schedulers-payload": {
          "balance-hot-region-scheduler": "null",
          "balance-leader-scheduler": "{\"name\":\"balance-leader-scheduler\",\"ranges\":[{\"start-key\":\"\",\"end-key\":\"\"}]}",
          "balance-region-scheduler": "{\"name\":\"balance-region-scheduler\",\"ranges\":[{\"start-key\":\"\",\"end-key\":\"\"}]}",
          "evict-leader-scheduler": "{\"store-id-ranges\":{\"4\":[{\"start-key\":\"\",\"end-key\":\"\"}]}}",
          "label-scheduler": "{\"name\":\"label-scheduler\",\"ranges\":[{\"start-key\":\"\",\"end-key\":\"\"}]}"
        },
        "store-limit-mode": "manual"
      },
      "replication": {
        "max-replicas": 3,
        "location-labels": "",
        "strictly-match-label": "false",
        "enable-placement-rules": "false"
      },
      "pd-server": {
        "use-region-storage": "true",
        "max-reset-ts-gap": 86400000000000,
        "key-type": "table",
        "runtime-services": "",
        "metric-storage": ""
      },
      "cluster-version": "3.1.0",
      "quota-backend-bytes": "8GiB",
      "auto-compaction-mode": "periodic",
      "auto-compaction-retention-v2": "1h",
      "TickInterval": "500ms",
      "ElectionInterval": "3s",
      "PreVote": true,
      "security": {
        "cacert-path": "",
        "cert-path": "",
        "key-path": "",
        "cert-allowed-cn": null
      },
      "label-property": {},
      "WarningMsgs": null,
      "DisableStrictReconfigCheck": false,
      "HeartbeatStreamBindInterval": "1m0s",
      "LeaderPriorityCheckInterval": "1m0s",
      "EnableDynamicConfig": false,
      "EnableDashboard": false
    }

»

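- Please stop TiKV and use tikv-ctl to check the meta information stored for that Region on each TiKV. Judging from the output, Region 571503 has peers on 3 stores. Store 4 no longer exists and can be ignored. For the other two stores, restart them one at a time to collect the information: do not shut both down at once, and only stop the second after the first has started successfully.

- While a store is stopped, run tikv-ctl --db /path/to/tikv/db raft region -r 571503 to collect the information.

https://docs.pingcap.com/zh/tidb/stable/tikv-control#通用参数

- If the output still contains store 4, clean it up once more using the method you used above; a host may have been missed earlier.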
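The one-store-at-a-time procedure described above can be written down as an ordered checklist so that the two surviving stores are never stopped together. The sketch below only builds the list of steps as text and runs nothing. `inspection_plan` is a hypothetical helper, the hosts are the addresses of stores 87701 and 189492 from the `store` output, `/path/to/tikv/db` is the placeholder path from the reply, and how TiKV is stopped and started depends on the deployment, so those steps are left as plain descriptions.

```python
from typing import List


def inspection_plan(hosts: List[str], db_path: str, region_id: int) -> List[str]:
    """Return ordered steps that inspect one TiKV store at a time.

    Each host is stopped, inspected with tikv-ctl, and restarted before
    the next host is touched. (Hypothetical helper; it executes nothing.)
    """
    steps = []
    for host in hosts:
        steps.append(f"[{host}] stop TiKV (method depends on your deployment)")
        steps.append(f"[{host}] tikv-ctl --db {db_path} raft region -r {region_id}")
        steps.append(f"[{host}] start TiKV and wait until the store is Up in pd-ctl")
    return steps


if __name__ == "__main__":
    # Hosts of stores 87701 and 189492, taken from the `store` output.
    hosts = ["192.168.241.53", "192.168.241.56"]
    for number, step in enumerate(
        inspection_plan(hosts, "/path/to/tikv/db", 571503), start=1
    ):
        print(f"{number}. {step}")
```

If the tikv-ctl output on either host still lists a peer on store 4, the remove-fail-stores command from earlier in the thread would be run on that host while it is stopped, before it is started again.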

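OK, thanks.

You're welcome. If the problem persists, please follow up here. Thanks.

Hello, I have also run into the lost-leader problem, and I don't see a corresponding solution in the steps above. Could you explain it?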