Hello, after turning off server-mode I restarted importer and lightning. The log output from each is as follows:

importer.log:

[2020/03/29 05:53:16.187 +00:00] [INFO] [setup.rs:75] ["start prometheus client"]

[2020/03/29 05:53:16.188 +00:00] [INFO] [tikv-importer.rs:41] ["Welcome to TiKV Importer."]

[2020/03/29 05:53:16.188 +00:00] [INFO] [tikv-importer.rs:43] []

[2020/03/29 05:53:16.188 +00:00] [INFO] [tikv-importer.rs:43] ["Release Version: 3.1.0-beta.1"]

[2020/03/29 05:53:16.188 +00:00] [INFO] [tikv-importer.rs:43] ["Git Commit Hash: 5e7a7568362063184003634916145992160338f1"]

[2020/03/29 05:53:16.188 +00:00] [INFO] [tikv-importer.rs:43] ["Git Commit Branch: release-3.1"]

[2020/03/29 05:53:16.188 +00:00] [INFO] [tikv-importer.rs:43] ["UTC Build Time: 2020-01-17 01:01:08"]

[2020/03/29 05:53:16.188 +00:00] [INFO] [tikv-importer.rs:43] ["Rust Version: rustc 1.42.0-nightly (0de96d37f 2019-12-19)"]

[2020/03/29 05:53:16.188 +00:00] [INFO] [tikv-importer.rs:45] []

[2020/03/29 05:53:16.204 +00:00] [INFO] [tikv-importer.rs:160] ["import server started"]

[2020/03/29 05:53:20.805 +00:00] [INFO] [kv_importer.rs:63] ["open engine"] [engine="EngineFile { uuid: Uuid { bytes: [204, 128, 138, 159, 220, 253, 82, 137, 159, 226, 252, 80, 28, 57, 65, 169] }, path: EnginePath { save: "/home/daslab/importer/data.import/cc808a9f-dcfd-5289-9fe2-fc501c3941a9", temp: "/home/daslab/importer/data.import/.temp/cc808a9f-dcfd-5289-9fe2-fc501c3941a9" } }"]

[2020/03/29 05:53:20.806 +00:00] [ERROR] [kv_importer.rs:68] ["open engine failed"] [err="File "/home/daslab/importer/data.import/41c24811-f498-5f61-83ed-ceacb685ed01" exists"] [uuid=41c24811-f498-5f61-83ed-ceacb685ed01]

[2020/03/29 05:53:20.825 +00:00] [WARN] [kv_service.rs:170] ["send rpc response"] [err=RemoteStopped]

[2020/03/29 05:53:20.825 +00:00] [WARN] [kv_service.rs:170] ["send rpc response"] [err=RemoteStopped]

[2020/03/29 05:53:20.825 +00:00] [WARN] [kv_service.rs:170] ["send rpc response"] [err=RemoteStopped]

lightning.log:

[2020/03/29 13:53:20.730 +08:00] [INFO] [version.go:48] ["Welcome to lightning"] ["Release Version"=v3.1.0-beta.1] ["Git Commit Hash"=605760d1b2025d1e1a8b7d0c668c74863d7d1271] ["Git Branch"=HEAD] ["UTC Build Time"="2020-01-10 12:16:24"] ["Go Version"="go version go1.12 linux/amd64"]

[2020/03/29 13:53:20.730 +08:00] [INFO] [lightning.go:165] [cfg] [cfg="{"id":1585461200730201032,"lightning":{"table-concurrency":6,"index-concurrency":2,"region-concurrency":32,"io-concurrency":5,"check-requirements":true},"tidb":{"host":"10.12.5.233","port":4000,"user":"root","status-port":10080,"pd-addr":"10.12.5.234:2379","sql-mode":"ONLY_FULL_GROUP_BY,STRICT_TRANS_TABLES,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,NO_AUTO_CREATE_USER,NO_ENGINE_SUBSTITUTION","max-allowed-packet":67108864,"distsql-scan-concurrency":100,"build-stats-concurrency":20,"index-serial-scan-concurrency":20,"checksum-table-concurrency":16},"checkpoint":{"enable":true,"schema":"tidb_lightning_checkpoint","driver":"file","keep-after-success":false},"mydumper":{"read-block-size":65536,"batch-size":107374182400,"batch-import-ratio":0,"data-source-dir":"/home/daslab/mydumper","no-schema":false,"character-set":"auto","csv":{"separator":",","delimiter":"\"","header":true,"trim-last-separator":false,"not-null":false,"null":"\\N","backslash-escape":true},"case-sensitive":false},"black-white-list":{"do-tables":null,"do-dbs":null,"ignore-tables":null,"ignore-dbs":["mysql","information_schema","performance_schema","sys"]},"tikv-importer":{"addr":"10.12.5.112:8287","backend":"importer","on-duplicate":"replace"},"post-restore":{"level-1-compact":false,"compact":false,"checksum":true,"analyze":true},"cron":{"switch-mode":"5m0s","log-progress":"5m0s"},"routes":null}"]

[2020/03/29 13:53:20.730 +08:00] [INFO] [lightning.go:194] ["load data source start"]

[2020/03/29 13:53:20.739 +08:00] [INFO] [lightning.go:197] ["load data source completed"] [takeTime=8.854188ms] []

[2020/03/29 13:53:20.745 +08:00] [INFO] [restore.go:245] ["the whole procedure start"]

[2020/03/29 13:53:20.751 +08:00] [INFO] [restore.go:283] ["restore table schema start"] [db=Block_Base]

[2020/03/29 13:53:20.752 +08:00] [INFO] [tidb.go:99] ["create tables start"] [db=Block_Base]

[2020/03/29 13:53:20.753 +08:00] [INFO] [tidb.go:117] ["create tables completed"] [db=Block_Base] [takeTime=1.134321ms] []

[2020/03/29 13:53:20.753 +08:00] [INFO] [restore.go:291] ["restore table schema completed"] [db=Block_Base] [takeTime=2.211599ms] []

[2020/03/29 13:53:20.756 +08:00] [INFO] [restore.go:545] ["restore all tables data start"]

[2020/03/29 13:53:20.756 +08:00] [INFO] [restore.go:566] ["restore table start"] [table=Block_Base.block_info]

[2020/03/29 13:53:20.756 +08:00] [INFO] [restore.go:683] ["reusing engines and files info from checkpoint"] [table=Block_Base.block_info] [enginesCnt=2] [filesCnt=570]

[2020/03/29 13:53:20.782 +08:00] [INFO] [backend.go:187] ["open engine"] [engineTag=Block_Base.block_info:-1] [engineUUID=cc808a9f-dcfd-5289-9fe2-fc501c3941a9]

[2020/03/29 13:53:20.782 +08:00] [INFO] [restore.go:747] ["import whole table start"] [table=Block_Base.block_info]

[2020/03/29 13:53:20.782 +08:00] [INFO] [restore.go:776] ["restore engine start"] [table=Block_Base.block_info] [engineNumber=0]

[2020/03/29 13:53:20.782 +08:00] [INFO] [restore.go:853] ["encode kv data and write start"] [table=Block_Base.block_info] [engineNumber=0]

[2020/03/29 13:53:20.783 +08:00] [INFO] [backend.go:187] ["open engine"] [engineTag=Block_Base.block_info:0] [engineUUID=41c24811-f498-5f61-83ed-ceacb685ed01]

[2020/03/29 13:53:20.785 +08:00] [INFO] [restore.go:1776] ["restore file start"] [table=Block_Base.block_info] [engineNumber=0] [fileIndex=13] [path=/home/daslab/mydumper/block_base/Block_Base.block_info.000000015.sql:0]

[2020/03/29 13:53:20.795 +08:00] [INFO] [restore.go:1776] ["restore file start"] [table=Block_Base.block_info] [engineNumber=0] [fileIndex=27] [path=/home/daslab/mydumper/block_base/Block_Base.block_info.000000029.sql:0]

[2020/03/29 13:53:20.803 +08:00] [ERROR] [restore.go:1602] ["write to data engine failed"] [table=Block_Base.block_info] [engineNumber=0] [fileIndex=7] [path=/home/daslab/mydumper/block_base/Block_Base.block_info.000000009.sql:0] [task=deliver] [error="rpc error: code = Canceled desc = Cancelled"]

[2020/03/29 13:53:20.804 +08:00] [ERROR] [restore.go:1602] ["write to data engine failed"] [table=Block_Base.block_info] [engineNumber=0] [fileIndex=13] [path=/home/daslab/mydumper/block_base/Block_Base.block_info.000000015.sql:0] [task=deliver] [error="rpc error: code = Canceled desc = Cancelled"]

[2020/03/29 13:53:20.804 +08:00] [ERROR] [restore.go:1602] ["write to data engine failed"] [table=Block_Base.block_info] [engineNumber=0] [fileIndex=10] [path=/home/daslab/mydumper/block_base/Block_Base.block_info.000000012.sql:0] [task=deliver] [error="rpc error: code = Canceled desc = Cancelled"]

[2020/03/29 13:53:20.846 +08:00] [ERROR] [restore.go:1602] ["write to data engine failed"] [table=Block_Base.block_info] [engineNumber=0] [fileIndex=30] [path=/home/daslab/mydumper/block_base/Block_Base.block_info.000000032.sql:0] [task=deliver] [error="rpc error: code = Canceled desc = Cancelled"]

[2020/03/29 13:53:20.909 +08:00] [ERROR] [restore.go:1602] ["write to data engine failed"] [table=Block_Base.block_info] [engineNumber=0] [fileIndex=21] [path=/home/daslab/mydumper/block_base/Block_Base.block_info.000000023.sql:0] [task=deliver] [error="rpc error: code = Canceled desc = Cancelled"]

[2020/03/29 13:53:20.953 +08:00] [INFO] [restore.go:256] ["user terminated"] [step=2] [error="rpc error: code = Canceled desc = Cancelled"]

[2020/03/29 13:53:20.953 +08:00] [INFO] [restore.go:266] ["the whole procedure completed"] [takeTime=207.864072ms] []

[2020/03/29 13:53:20.953 +08:00] [INFO] [restore.go:442] ["everything imported, stopping periodic actions"]

[2020/03/29 13:53:20.953 +08:00] [INFO] [main.go:61] ["tidb lightning exit"]

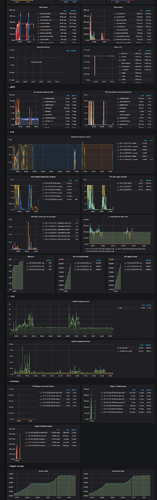

In addition, the complete log files for lightning and importer are attached.
