- 【TiDB 版本】:v3.0.5

- 【问题描述】:使用tcpcopy引流线上流量压测tidb集群,出现一些慢查询,但是该慢SQL手动执行没有性能问题。

查看慢日志内容如下:

SQL1

# Time: 2020-01-10T11:14:45.573011107+08:00

# Txn_start_ts: 413827355604156418

# User: imrelation_bs_w@10.xx.xx.xx

# Conn_ID: 5049872

# Query_time: 3.707086752

# Parse_time: 0.000140625

# Compile_time: 0.000626766

# Backoff_time: 3.674 Request_count: 2 Total_keys: 11 Process_keys: 9 Prewrite_time: 0.013738701 Commit_time: 0.001622127 Get_commit_ts_time: 0.000437774 Resolve_lock_time: 0.021305638 Write_keys: 15 Write_size: 2190 Prewrite_region: 3

# DB: im_relation

# Index_names: [im_b_c_relation_98:from_to]

# Is_internal: false

# Digest: 4c956cc9c776d3012ac14a1e8ed6a6d4e214c03762a65dfcee9685a44ab5c94d

# Stats: im_b_c_relation_98:413827341042057272

# Num_cop_tasks: 2

# Cop_proc_avg: 0 Cop_proc_p90: 0 Cop_proc_max: 0 Cop_proc_addr: 10.16.82.6:20171

# Cop_wait_avg: 0 Cop_wait_p90: 0 Cop_wait_max: 0 Cop_wait_addr: 10.16.82.6:20171

# Mem_max: 19532

# Prepared: false

# Has_more_results: false

# Succ: true

# Plan: tidb_decode_plan('xwHwRjAJMzFfOQkwCTAJCjEJMTNfNwkxCTAJdGFibGU6aW1fYl9jX3JlbGF0aW9uXzk4LCBpbmRleDpmcm9tX3VzZXJfaWQsIHRvGQxkcmFuZ2U6Wy0zOTcwMzI5NyA2MTUxMTU3MSxGEwBIXSwga2VlcCBvcmRlcjpmYWxzZQGFCDBfOHqFAEI2AA==')

UPDATE im_b_c_relation_98 SET `from_user_id`='-39703297', `to_user_id`='61511571', `is_reply`='0', `from_first_time`='1577244878', `from_last_time`='1578626081', `to_first_time`='0', `to_last_time`='0', `last_user`='1', `last_time`='1578626081', `last_msg`='{\"extra\":{\"novisible\":\"b\",\"recall\":\"c\",\"post_id\":\"10671296\"},\"content\":\"您好,打扰您一下\"}', `last_msg_type`='RC:TxtMsg', `sort`='0001578626081', `noread_num`='0', `update_at`='1578626081' WHERE `from_user_id`='-39703297' && `to_user_id`='61511571';

SQL2:

# Time: 2020-01-10T11:15:16.130062529+08:00

# Txn_start_ts: 413827363376726057

# User: imrelation_bs_w@10.xx.xx.xx

# Conn_ID: 5049872

# Query_time: 4.585123578

# Parse_time: 0.000140222

# Compile_time: 0.000531084

# Wait_time: 0.001 Request_count: 2 Total_keys: 8 Process_keys: 6 Prewrite_time: 4.580365244 Commit_time: 0.001678787 Get_commit_ts_time: 0.000210794 Commit_backoff_time: 8.235 Backoff_types: [txnLock txnLock txnLock txnLock txnLock txnLock txnLock txnLock txnLock txnLock] Resolve_lock_time: 0.01575911 Write_keys: 15 Write_size: 3548 Prewrite_region: 3

# DB: im_relation

# Index_names: [im_b_c_relation_41:from_to]

# Is_internal: false

# Digest: 9398b355b282d35c1344af1497428d876845b15fed86e34447eaf4f4c7a8e0e4

# Stats: im_b_c_relation_41:413827295953289218

# Num_cop_tasks: 2

# Cop_proc_avg: 0 Cop_proc_p90: 0 Cop_proc_max: 0 Cop_proc_addr: 10.16.82.7:20172

# Cop_wait_avg: 0.0005 Cop_wait_p90: 0.001 Cop_wait_max: 0.001 Cop_wait_addr: 10.16.82.7:20172

# Mem_max: 18764

# Prepared: false

# Has_more_results: false

# Succ: true

# Plan: tidb_decode_plan('xwHwRjAJMzFfOQkwCTAJCjEJMTNfNwkxCTAJdGFibGU6aW1fYl9jX3JlbGF0aW9uXzQxLCBpbmRleDpmcm9tX3VzZXJfaWQsIHRvGQxkcmFuZ2U6Wy01Nzc0MDY0MCA2MjU3NTMxNSxGEwBIXSwga2VlcCBvcmRlcjpmYWxzZQGFEDBfOAkxcoUAQjYA')

UPDATE im_b_c_relation_41 SET `from_user_id`='-57740640', `to_user_id`='62575315', `is_reply`='0', `from_first_time`='1576645278', `from_last_time`='1578626111', `to_first_time`='0', `to_last_time`='0', `last_user`='1', `last_time`='1578626111', `last_msg`='{\"job_id\":\"10627168\",\"company_name\":\"上海忻美芃邦信息科技有限公司\",\"job_title\":\"英语老师\",\"job_date_type_desc\":\"全职\",\"job_desc\":\"\",\"job_location_quanguo\":\"\",\"job_locations\":[{\"address\":\"财富时代大厦\",\"lat_lng\":\"31.24936,121.448738\"}],\"tags\":[\"全职\",\"英语老师\"],\"demand_tags\":[\"男女不限\",\"20-35岁\",\"学历不限\",\"1-3年\",\"身份不限\"],\"salary_desc\":\"10.0k-15.0k元/月\",\"job_type_desc\":\"英语老师\",\"payment_type_desc\":\"月结\",\"template_type\":0,\"extra\":{\"novisible\":\"b\",\"recall\":\"c\",\"post_id\":\"10627168\"},\"message_card_name\":\"职位邀请\"}', `last_msg_type`='doumi:RecruiterInviteYouMsg', `sort`='0001578626111', `noread_num`='0', `update_at`='1578626111' WHERE `from_user_id`='-57740640' && `to_user_id`='62575315';

慢SQL大部分是因为Backoff_time或Prewrite_time耗时造成的。

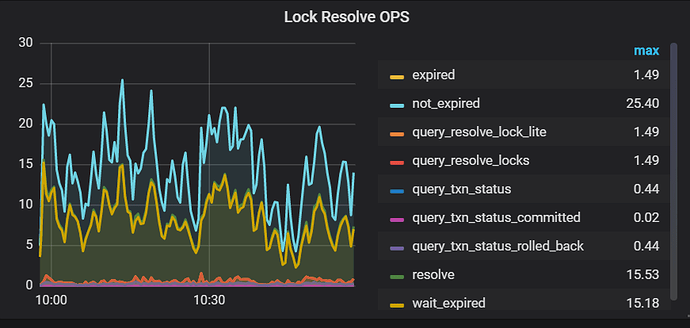

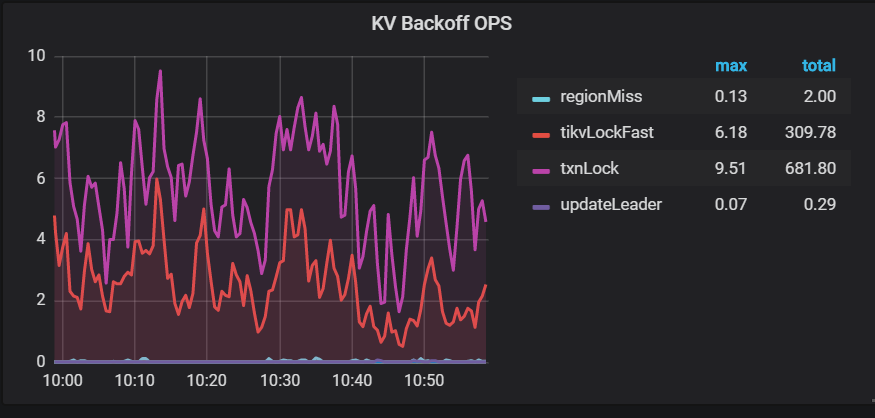

附上有关锁冲突的监控