【TiDB Environment】Production

【TiDB Version】7.5.5

【Operating System】Debian

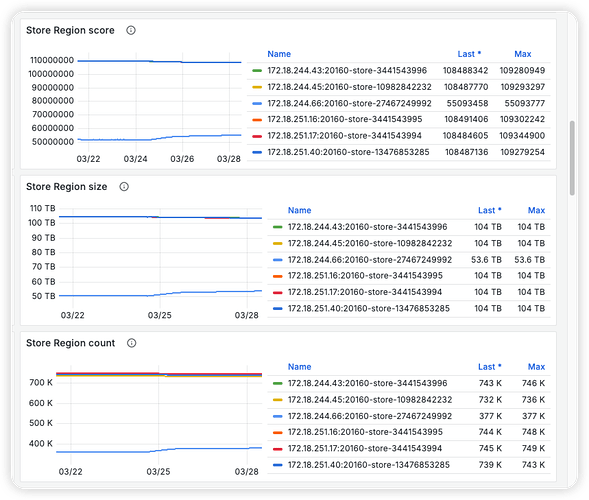

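【Cluster Data Volume】We place hot and cold data on nodes backed by disks of different performance. The cold-data tier (used for offline statistics) currently has 5+1 SATA-disk nodes (1 of them newly added), each holding about 10 TB of data.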

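【Problem: Symptoms and Impact】Data balancing onto the newly added node is very slow. Following various knowledge-base articles, we have adjusted the scheduler config and the store limit settings, trying both very large and very small values.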

The current configuration is:

{
  "replication": {
    "enable-placement-rules": "true",
    "enable-placement-rules-cache": "false",
    "isolation-level": "host",
    "location-labels": "zone,dc,host,disk",
    "max-replicas": 3,
    "strictly-match-label": "false"
  },
  "schedule": {
    "enable-cross-table-merge": "true",
    "enable-diagnostic": "false",
    "enable-joint-consensus": "true",
    "enable-tikv-split-region": "true",
    "enable-witness": "false",
    "high-space-ratio": 0.7,
    "hot-region-cache-hits-threshold": 3,
    "hot-region-schedule-limit": 4,
    "hot-regions-reserved-days": 7,
    "hot-regions-write-interval": "10m0s",
    "leader-schedule-limit": 500,
    "leader-schedule-policy": "count",
    "low-space-ratio": 0.98,
    "max-merge-region-keys": 500000,
    "max-merge-region-size": 50,
    "max-movable-hot-peer-size": 512,
    "max-pending-peer-count": 200,
    "max-snapshot-count": 200,
    "max-store-down-time": "30m0s",
    "max-store-preparing-time": "48h0m0s",
    "merge-schedule-limit": 500,
    "patrol-region-interval": "10ms",
    "region-schedule-limit": 500,
    "region-score-formula-version": "v2",
    "replica-schedule-limit": 500,
    "slow-store-evicting-affected-store-ratio-threshold": 0.3,
    "split-merge-interval": "1h0m0s",
    "store-limit-version": "v2",
    "switch-witness-interval": "1h0m0s",
    "tolerant-size-ratio": 0,
    "witness-schedule-limit": 64
  }
}

The store limit on these nodes is currently set to add-peer: 1000 and remove-peer: 50.
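One thing that may be worth checking in the configuration above is that `store-limit-version` is `v2`. As far as I understand the PD documentation, in v2 mode PD adjusts the store limit dynamically from TiKV's snapshot capability, so the manually set add-peer/remove-peer values may not be what governs the rate. That reading is an assumption, not something verified on this cluster. A minimal Python sketch of the check, run here against a hand-copied subset of the config (the function name `store_limit_notes` is made up for illustration):

```python
import json

# Subset of the schedule settings quoted in this post. In practice this
# would be the full JSON printed by pd-ctl's `config show`.
CONFIG_JSON = """
{
  "schedule": {
    "store-limit-version": "v2",
    "max-snapshot-count": 200,
    "max-pending-peer-count": 200,
    "region-schedule-limit": 500
  }
}
"""


def store_limit_notes(config: dict) -> list[str]:
    """Return notes about settings that interact with manual store limits.

    Hypothetical helper: it only inspects the parsed config, it does not
    talk to PD.
    """
    schedule = config.get("schedule", {})
    version = schedule.get("store-limit-version", "v1")
    if version == "v2":
        # Assumption: in v2 mode PD sizes the limit itself, so the
        # add-peer/remove-peer numbers may not be the effective throttle.
        return [
            "store-limit-version is v2: manually set add-peer/remove-peer "
            "limits may not be what throttles scheduling"
        ]
    return [f"store-limit-version is {version}: manual store limits apply"]


if __name__ == "__main__":
    for note in store_limit_notes(json.loads(CONFIG_JSON)):
        print(note)
```

If the note for v2 is printed, one experiment would be to compare the balancing rate with `store-limit-version` set back to `v1`, where the manual limits are the documented control.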

【Copy and Paste the ERROR Logs】

【Other Attachments: Screenshots/Logs/Monitoring】

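We have confirmed that the store scores currently differ, so PD does have a reason to schedule; the problem is only that the balancing rate is extremely slow.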
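To put a number on the score difference, the JSON printed by pd-ctl's `store` command can be summarized per store. The sketch below assumes the usual output shape (`stores[].store.labels`, `stores[].status.region_score`). The sample data, the addresses, and the `disk=sata` label value are all made up for illustration and are not taken from this cluster.

```python
import json

# Made-up sample in the shape of pd-ctl's `store` output.
STORES_JSON = """
{
  "count": 3,
  "stores": [
    {"store": {"id": 1, "address": "10.0.0.1:20160",
               "labels": [{"key": "disk", "value": "sata"}]},
     "status": {"region_count": 120000, "region_score": 9500000.0}},
    {"store": {"id": 2, "address": "10.0.0.2:20160",
               "labels": [{"key": "disk", "value": "sata"}]},
     "status": {"region_count": 4000, "region_score": 300000.0}},
    {"store": {"id": 3, "address": "10.0.0.3:20160",
               "labels": [{"key": "disk", "value": "nvme"}]},
     "status": {"region_count": 50000, "region_score": 4000000.0}}
  ]
}
"""


def score_gaps(doc: dict, label_key: str, label_value: str) -> list[dict]:
    """List stores carrying the given label, lowest region score first,
    with each store's distance to the highest score in that group."""
    picked = []
    for item in doc["stores"]:
        labels = {l["key"]: l["value"] for l in item["store"].get("labels", [])}
        if labels.get(label_key) != label_value:
            continue  # skip stores outside the tier being compared
        status = item["status"]
        picked.append({
            "id": item["store"]["id"],
            "address": item["store"]["address"],
            "region_count": status.get("region_count", 0),
            "region_score": status.get("region_score", 0.0),
        })
    if not picked:
        return []
    top = max(p["region_score"] for p in picked)
    for p in picked:
        p["gap_to_max"] = top - p["region_score"]
    return sorted(picked, key=lambda p: p["region_score"])


if __name__ == "__main__":
    for row in score_gaps(json.loads(STORES_JSON), "disk", "sata"):
        print(row)
```

Filtering by label matters here because hot and cold stores live on different disk tiers, and comparing scores across tiers would not say anything about balance within the cold tier.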

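We followed this troubleshooting article to locate the problem: the TiDB community column post "TiDB:TiKV 副本搬迁原理及常见问题" (TiKV replica migration: principles and common problems).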

We verified that the region_worker CPU on the newly added node is constantly busy, but we have found no way to increase its throughput.

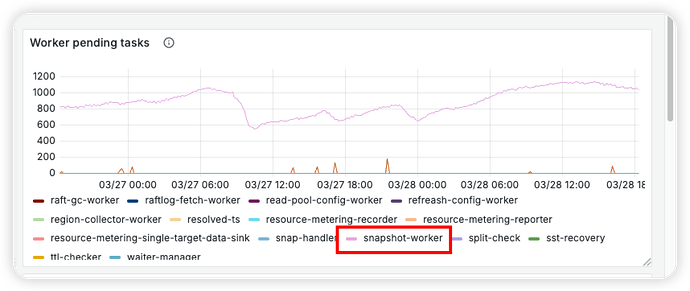

The worker pending tasks panel shows the new node stuck at the apply snapshot step, which matches the busy region_worker CPU noted above.
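If applying snapshots on the new node is the bottleneck, a rough back-of-the-envelope estimate shows what "slow" means in hours. The sketch below assumes the 5 existing nodes hold about 10 TB each, that the target is an even split by size across all 6 nodes, and that the new node ingests at a steady rate. The 50 MB/s figure is purely hypothetical and should be replaced with the rate observed in monitoring.

```python
def balance_eta_hours(old_nodes: int, data_per_node_tb: float,
                      new_nodes: int, ingest_mb_per_s: float) -> float:
    """Estimate hours until the new nodes hold an even share of the data.

    Assumes an even split by size and a constant ingest rate per new node;
    TB and MB are treated as binary units (1 TB = 1024 * 1024 MB).
    """
    if ingest_mb_per_s <= 0:
        raise ValueError("ingest_mb_per_s must be positive")
    total_tb = old_nodes * data_per_node_tb
    # Even share each node should hold once balancing finishes.
    target_per_node_tb = total_tb / (old_nodes + new_nodes)
    # Each new node ingests its own share in parallel with the others.
    to_move_mb = target_per_node_tb * 1024 * 1024
    return to_move_mb / ingest_mb_per_s / 3600


if __name__ == "__main__":
    # 5 old nodes x 10 TB, 1 new node, hypothetical 50 MB/s ingest rate.
    hours = balance_eta_hours(5, 10.0, 1, 50.0)
    print(f"about {hours:.1f} hours ({hours / 24:.1f} days)")
```

Under these assumptions the new node needs to receive roughly 8.3 TB, so even a sustained 50 MB/s would take on the order of two days. Comparing the estimate against the observed rate helps separate "slow but progressing" from "effectively stalled".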

Also adding a screenshot of the PD operators.