【TiDB Environment】Production / Test / PoC

【TiDB Version】

【Steps to Reproduce】What operations led to the problem

【Problem: Symptoms and Impact】

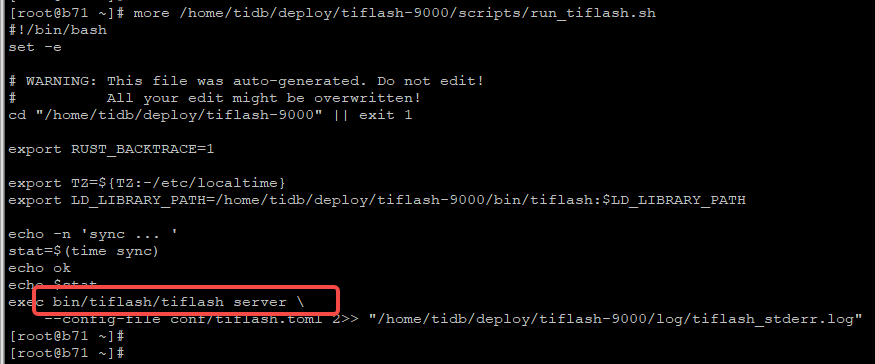

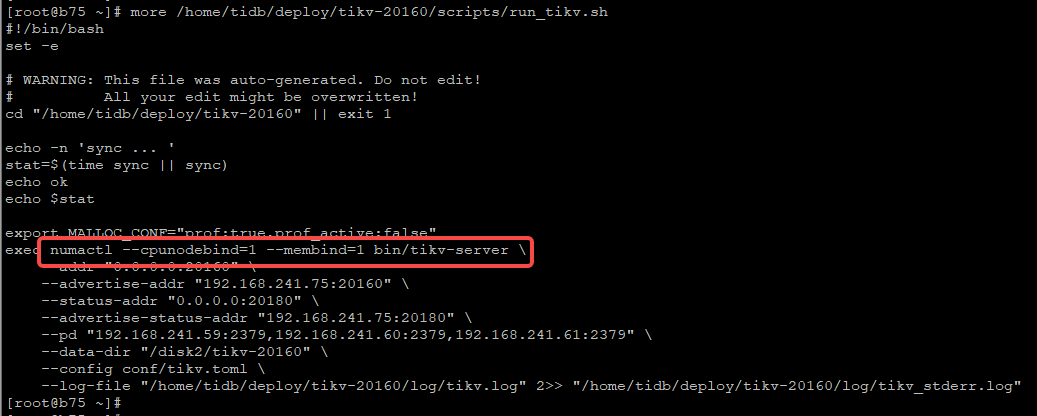

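I just scaled out the cluster by adding 2 TiFlash nodes and found that the numa_node binding did not take effect on them. With the same configuration on the same batch of machines, the binding works on the TiKV nodes. Filtering the logs did not turn up anything useful, and I don't know how to troubleshoot this. Could anyone help take a look?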
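For anyone who wants to verify the binding outside of the screenshots, here is a minimal sketch of one way to check it, assuming a Linux host where `/proc/<pid>/status` exposes `Cpus_allowed_list` and `/sys/devices/system/node/node<N>/cpulist` lists the CPUs of each NUMA node. The function names are hypothetical helpers for illustration, not part of TiUP or TiFlash, and the string comparison assumes both files print the CPU list in the same format.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not part of TiUP/TiFlash) for checking CPU affinity.

# Print the CPUs a process is allowed to run on, as reported by /proc.
allowed_cpus() {
  local pid="$1"
  awk '/^Cpus_allowed_list:/ {print $2}' "/proc/${pid}/status"
}

# Print the CPUs that belong to a NUMA node, as reported by sysfs.
node_cpus() {
  local node="$1"
  cat "/sys/devices/system/node/node${node}/cpulist"
}

# Print "bound" if the process's allowed CPU list equals the node's CPU list,
# otherwise print "not bound". Assumes both lists use the same text format.
check_numa_binding() {
  local pid="$1" node="$2"
  if [ "$(allowed_cpus "$pid")" = "$(node_cpus "$node")" ]; then
    echo "bound"
  else
    echo "not bound"
  fi
}
```

On a TiFlash host this could be called as `check_numa_binding <tiflash-pid> 1`, and the same call against a TiKV process on a working node gives a baseline to compare with. This only checks CPU affinity; it says nothing about the memory policy.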

【Resource Configuration】

【Attachments: Screenshots / Logs / Monitoring】

$ tiup cluster show-config tidb-xxxxxxx
tiup is checking updates for component cluster ...
Starting component `cluster`: /home/tidb/.tiup/components/cluster/v1.12.1/tiup-cluster show-config tidb-xxxxxxx
global:
  user: tidb
  ssh_port: 17717
  ssh_type: builtin
  deploy_dir: /home/tidb/deploy
  data_dir: /disk2
  os: linux
monitored:
  node_exporter_port: 9100
  blackbox_exporter_port: 9115
  deploy_dir: /home/tidb/deploy/monitor-9100
  data_dir: /disk2/monitor-9100
  log_dir: /home/tidb/deploy/monitor-9100/log
server_configs:
  tidb:
    binlog.enable: false
    binlog.ignore-error: false
    log.slow-threshold: 3000
    mem-quota-query: 3221225472
    proxy-protocol.networks: 192.168.241.54,192.168.241.55,192.168.241.101,192.168.241.100
  tikv:
    readpool.coprocessor.use-unified-pool: true
    readpool.storage.use-unified-pool: false
    rocksdb.defaultcf.block-cache-size: 32GB
    rocksdb.lockcf.block-cache-size: 2.56GB
    rocksdb.writecf.block-cache-size: 19.2GB
  pd:
    replication.enable-placement-rules: true
    schedule.leader-schedule-limit: 4
    schedule.region-schedule-limit: 2048
    schedule.replica-schedule-limit: 64
  tidb_dashboard: {}
  tiflash: {}
  tiflash-learner: {}
  pump: {}
  drainer: {}
  cdc: {}
  kvcdc: {}
  grafana: {}
tikv_servers:
- host: 192.168.241.73
  ssh_port: 17717
  port: 20160
  status_port: 20180
  deploy_dir: /home/tidb/deploy/tikv-20160
  data_dir: /disk2/tikv-20160
  log_dir: /home/tidb/deploy/tikv-20160/log
  numa_node: "1"
  arch: amd64
  os: linux
- host: 192.168.241.74
  ssh_port: 17717
  port: 20160
  status_port: 20180
  deploy_dir: /home/tidb/deploy/tikv-20160
  data_dir: /disk2/tikv-20160
  log_dir: /home/tidb/deploy/tikv-20160/log
  numa_node: "1"
  arch: amd64
  os: linux
- host: 192.168.241.75
  ssh_port: 17717
  port: 20160
  status_port: 20180
  deploy_dir: /home/tidb/deploy/tikv-20160
  data_dir: /disk2/tikv-20160
  log_dir: /home/tidb/deploy/tikv-20160/log
  numa_node: "1"
  arch: amd64
  os: linux
- host: 192.168.241.76
  ssh_port: 17717
  port: 20160
  status_port: 20180
  deploy_dir: /home/tidb/deploy/tikv-20160
  data_dir: /disk2/tikv-20160
  log_dir: /home/tidb/deploy/tikv-20160/log
  numa_node: "1"
  arch: amd64
  os: linux
tiflash_servers:
- host: 192.168.241.71
  ssh_port: 17717
  tcp_port: 9000
  http_port: 8123
  flash_service_port: 3930
  flash_proxy_port: 20170
  flash_proxy_status_port: 20292
  metrics_port: 8234
  deploy_dir: /home/tidb/deploy/tiflash-9000
  data_dir: /disk2/tiflash-9000
  log_dir: /home/tidb/deploy/tiflash-9000/log
  numa_node: "1"
  arch: amd64
  os: linux
- host: 192.168.241.72
  ssh_port: 17717
  tcp_port: 9000
  http_port: 8123
  flash_service_port: 3930
  flash_proxy_port: 20170
  flash_proxy_status_port: 20292
  metrics_port: 8234
  deploy_dir: /home/tidb/deploy/tiflash-9000
  data_dir: /disk2/tiflash-9000
  log_dir: /home/tidb/deploy/tiflash-9000/log
  numa_node: "1"
  arch: amd64
  os: linux