Writing data to TiDB with Flink: a test write into a table with only two columns reaches less than 100 rows per second.


[TiDB environment]

TiDB v5.3.0

All machines: 16 cores, 64 GB RAM, 1 TB SSD

3 PD + TiDB

9 TiKV

3 TiFlash

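[Overview] Scenario and problem summary

We use Flink to consume Kafka messages and write them into TiDB. We first noticed that consumption was extremely slow, at only about 7 messages per second. After repeated testing we narrowed the cause down to slow writes on the TiDB side: fewer than 100 rows per second.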
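The post does not say whether rows are sent one statement at a time or in batches. As a minimal sketch of how the write path could be measured outside Flink, the helper below groups rows into multi-row `INSERT` statements and reports rows per second. The table name `demo_table`, the columns `id` and `val`, and the `execute(sql, params)` callback are all hypothetical; in a real test the callback would wrap a MySQL-protocol driver cursor and a commit.

```python
import time
from typing import Callable, Iterable, List, Sequence, Tuple

Row = Tuple[int, str]  # hypothetical two-column row: (id, val)


def chunked(rows: Sequence[Row], batch_size: int) -> Iterable[Sequence[Row]]:
    """Yield consecutive slices of at most batch_size rows."""
    for start in range(0, len(rows), batch_size):
        yield rows[start:start + batch_size]


def build_insert(table: str, columns: Sequence[str], n_rows: int) -> str:
    """Build one multi-row INSERT with %s placeholders for n_rows rows."""
    one_row = "(" + ", ".join(["%s"] * len(columns)) + ")"
    values = ", ".join([one_row] * n_rows)
    return f"INSERT INTO {table} ({', '.join(columns)}) VALUES {values}"


def measure_rows_per_second(
    rows: Sequence[Row],
    batch_size: int,
    execute: Callable[[str, List[object]], None],
) -> float:
    """Send rows in batches through execute(sql, params); return rows/second."""
    started = time.perf_counter()
    for batch in chunked(rows, batch_size):
        sql = build_insert("demo_table", ("id", "val"), len(batch))
        # Flatten [(1, "a"), (2, "b")] into [1, "a", 2, "b"] for the placeholders.
        params = [value for row in batch for value in row]
        execute(sql, params)
    elapsed = time.perf_counter() - started
    return len(rows) / elapsed if elapsed > 0 else float("inf")
```

Running the same row set with `batch_size=1` and with a larger batch size would show whether the per-statement round trip, rather than the cluster itself, dominates the write time.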

[Background] Operations performed so far

The following is the configuration shown by `tiup cluster edit-config`:

    global:
      user: root
      ssh_port: 22
      ssh_type: builtin
      deploy_dir: /data/tidb-deploy
      data_dir: /data/tidb-data
      os: linux
    monitored:
      node_exporter_port: 9100
      blackbox_exporter_port: 9115
      deploy_dir: /data/tidb-deploy/monitored-9100
      data_dir: /data/tidb-data/monitored-9100
      log_dir: /data/tidb-deploy/monitored-9100/log
    server_configs:
      tidb:
        binlog.enable: false
        binlog.ignore-error: false
        log.level: error
        log.slow-threshold: 300
        mem-quota-query: 51539607552
        performance.txn-total-size-limit: 1073741824
      tikv:
        raftdb.defaultcf.force-consistency-checks: false
        raftstore.apply-max-batch-size: 256
        raftstore.apply-pool-size: 10
        raftstore.hibernate-regions: true
        raftstore.messages-per-tick: 1024
        raftstore.perf-level: 5
        raftstore.raft-max-inflight-msgs: 2048
        raftstore.store-io-pool-size: 2
        raftstore.store-max-batch-size: 256
        raftstore.store-pool-siz: 16
        raftstore.store-pool-size: 8
        raftstore.sync-log: false
        readpool.coprocessor.use-unified-pool: true
        readpool.storage.use-unified-pool: true
        readpool.unified.max-thread-count: 12
        rocksdb.defaultcf.force-consistency-checks: false
        rocksdb.lockcf.force-consistency-checks: false
        rocksdb.raftcf.force-consistency-checks: false
        rocksdb.writecf.force-consistency-checks: false
        server.grpc-concurrency: 16
        storage.block-cache.capacity: 148G
        storage.scheduler-worker-pool-size: 64
      pd:
        replication.enable-placement-rules: true
        schedule.leader-schedule-limit: 4
        schedule.region-schedule-limit: 2048
        schedule.replica-schedule-limit: 64
      tiflash:
        profiles.default.max_memory_usage: 0
        profiles.default.max_memory_usage_for_all_queries: 0
      tiflash-learner: {}
      pump: {}
      drainer: {}
      cdc: {}
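Two entries in the TiKV section may be worth double-checking: `raftstore.store-pool-siz` appears next to the almost identical `raftstore.store-pool-size`, and `storage.block-cache.capacity` is set to 148G while the machines are described as having 64 GB of memory. The sketch below is a hypothetical sanity check, not a TiUP or TiKV feature. It flags near-identical key names and compares a configured size against the machine memory; the helper names and the similarity cutoff are assumptions chosen for illustration.

```python
import difflib
import re
from typing import List, Tuple

UNITS = {"K": 2**10, "M": 2**20, "G": 2**30, "T": 2**40}


def parse_size(text: str) -> int:
    """Convert a size such as '148G' or '64GB' into bytes."""
    match = re.fullmatch(r"(\d+(?:\.\d+)?)\s*([KMGT])i?B?", text.strip(), re.IGNORECASE)
    if match is None:
        raise ValueError(f"unrecognised size: {text!r}")
    return int(float(match.group(1)) * UNITS[match.group(2).upper()])


def near_duplicate_keys(keys: List[str], cutoff: float = 0.95) -> List[Tuple[str, str]]:
    """Return pairs of distinct keys that are almost identical (possible typos)."""
    pairs = []
    for i, first in enumerate(keys):
        for second in keys[i + 1:]:
            ratio = difflib.SequenceMatcher(None, first, second).ratio()
            if first != second and ratio >= cutoff:
                pairs.append((first, second))
    return pairs


def exceeds_memory(configured: str, machine_memory: str) -> bool:
    """True when a configured size is larger than the machine's total memory."""
    return parse_size(configured) > parse_size(machine_memory)


if __name__ == "__main__":
    keys = [
        "raftstore.store-io-pool-size",
        "raftstore.store-pool-siz",
        "raftstore.store-pool-size",
    ]
    print(near_duplicate_keys(keys))
    print(exceeds_memory("148G", "64G"))
```

Whether either entry actually contributes to the slow writes is not established by this check; it only points at values that look inconsistent with the rest of the post.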

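[Symptoms] Business and database symptoms

TiDB writes are very slow.

[Problem] Current problem

TiDB writes are very slow.

[Business impact]

Kafka messages are piling up.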

[TiDB version]

v5.3.0

[Application software and version]

[Attachments] Relevant logs and configuration

- TiUP Cluster Display information

Starting component `cluster`: /root/.tiup/components/cluster/v1.8.2/tiup-cluster display chit-tidb

Cluster type: tidb

Cluster name: chit-tidb

Cluster version: v5.3.0

Deploy user: root

SSH type: builtin

Dashboard URL: http://172.26.9.15:2379/dashboard

| ID | Role | Host | Ports | OS/Arch | Status | Data Dir | Deploy Dir |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 172.26.8.11:9093 | alertmanager | 172.26.8.11 | 9093/9094 | linux/x86_64 | Up | /data/tidb-data/alertmanager-9093 | /data/tidb-deploy/alertmanager-9093 |
| 172.26.8.11:3000 | grafana | 172.26.8.11 | 3000 | linux/x86_64 | Up | - | /data/tidb-deploy/grafana-3000 |
| 172.26.10.19:2379 | pd | 172.26.10.19 | 2379/2380 | linux/x86_64 | Up | /data/tidb-data/pd-2379 | /data/tidb-deploy/pd-2379 |
| 172.26.8.11:2379 | pd | 172.26.8.11 | 2379/2380 | linux/x86_64 | Up\|L | /data/tidb-data/pd-2379 | /data/tidb-deploy/pd-2379 |
| 172.26.9.15:2379 | pd | 172.26.9.15 | 2379/2380 | linux/x86_64 | Up\|UI | /data/tidb-data/pd-2379 | /data/tidb-deploy/pd-2379 |
| 172.26.8.11:9090 | prometheus | 172.26.8.11 | 9090 | linux/x86_64 | Up | /data/tidb-data/prometheus-8249 | /data/tidb-deploy/prometheus-8249 |
| 172.26.10.19:4000 | tidb | 172.26.10.19 | 4000/10080 | linux/x86_64 | Up | - | /data/tidb-deploy/tidb-4000 |
| 172.26.8.11:4000 | tidb | 172.26.8.11 | 4000/10080 | linux/x86_64 | Up | - | /data/tidb-deploy/tidb-4000 |
| 172.26.9.15:4000 | tidb | 172.26.9.15 | 4000/10080 | linux/x86_64 | Up | - | /data/tidb-deploy/tidb-4000 |
| 172.26.10.22:9000 | tiflash | 172.26.10.22 | 9000/8123/3930/20170/20292/8234 | linux/x86_64 | Up | /data/tidb-data/tiflash-9000 | /data/tidb-deploy/tiflash-9000 |
| 172.26.8.14:9000 | tiflash | 172.26.8.14 | 9000/8123/3930/20170/20292/8234 | linux/x86_64 | Up | /data/tidb-data/tiflash-9000 | /data/tidb-deploy/tiflash-9000 |
| 172.26.9.18:9000 | tiflash | 172.26.9.18 | 9000/8123/3930/20170/20292/8234 | linux/x86_64 | Up | /data/tidb-data/tiflash-9000 | /data/tidb-deploy/tiflash-9000 |
| 172.26.10.20:20160 | tikv | 172.26.10.20 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |
| 172.26.10.21:20160 | tikv | 172.26.10.21 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |
| 172.26.10.25:20160 | tikv | 172.26.10.25 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |
| 172.26.8.12:20160 | tikv | 172.26.8.12 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |
| 172.26.8.13:20160 | tikv | 172.26.8.13 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |
| 172.26.9.16:20160 | tikv | 172.26.9.16 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |
| 172.26.9.17:20160 | tikv | 172.26.9.17 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |
| 172.26.9.23:20160 | tikv | 172.26.9.23 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |
| 172.26.9.24:20160 | tikv | 172.26.9.24 | 20160/20180 | linux/x86_64 | Up | /data/tidb-data/tikv-20160 | /data/tidb-deploy/tikv-20160 |

Total nodes: 21
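To cross-check the node list against the environment description, the roles can be tallied from the whitespace-separated rows that `tiup cluster display` prints. This is only a small sketch: `role_counts` is a hypothetical helper, and the three sample rows are copied from the listing in this post.

```python
from collections import Counter
from typing import Iterable


def role_counts(rows: Iterable[str]) -> Counter:
    """Count nodes per role; the role is the second whitespace-separated field."""
    counts: Counter = Counter()
    for row in rows:
        fields = row.split()
        if len(fields) >= 2:
            counts[fields[1]] += 1
    return counts


if __name__ == "__main__":
    sample = [
        "172.26.8.11:2379 pd 172.26.8.11 2379/2380 linux/x86_64 Up|L /data/tidb-data/pd-2379 /data/tidb-deploy/pd-2379",
        "172.26.8.11:4000 tidb 172.26.8.11 4000/10080 linux/x86_64 Up - /data/tidb-deploy/tidb-4000",
        "172.26.8.12:20160 tikv 172.26.8.12 20160/20180 linux/x86_64 Up /data/tidb-data/tikv-20160 /data/tidb-deploy/tikv-20160",
    ]
    print(role_counts(sample))
```

Applied to all rows of the listing, the per-role counts should add up to the reported total of 21 nodes.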

- TiUP Cluster Edit config information: identical to the `tiup cluster edit-config` output listed earlier in this post.

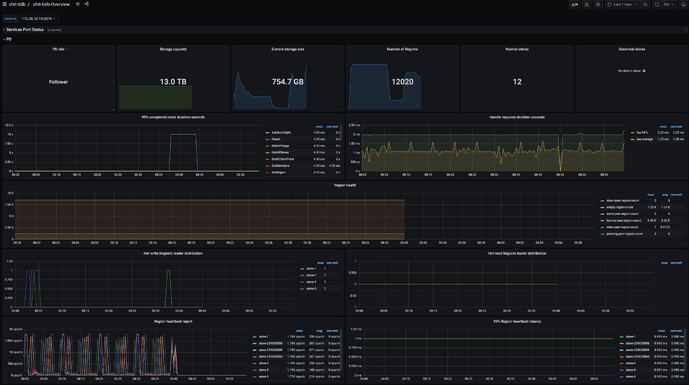

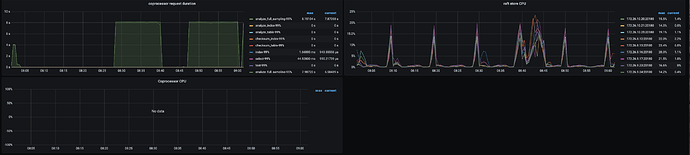

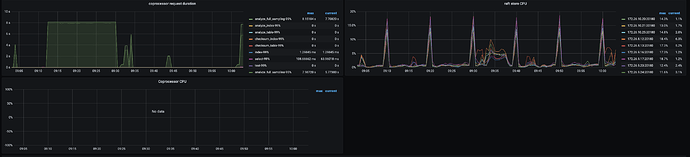

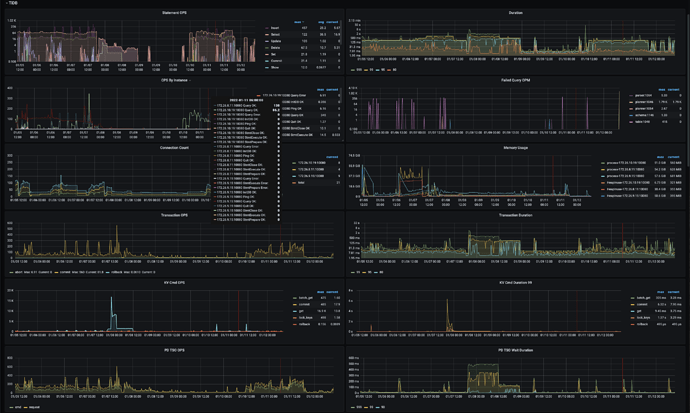

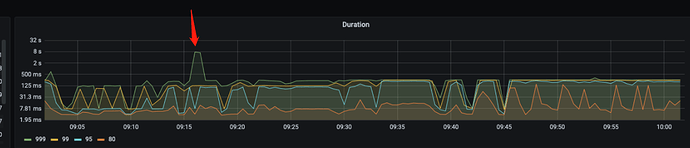

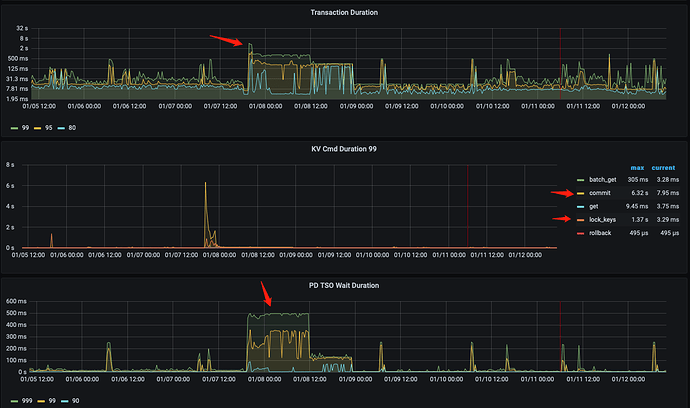

Monitoring (https://metricstool.pingcap.com/)

- TiDB-Overview Grafana monitoring

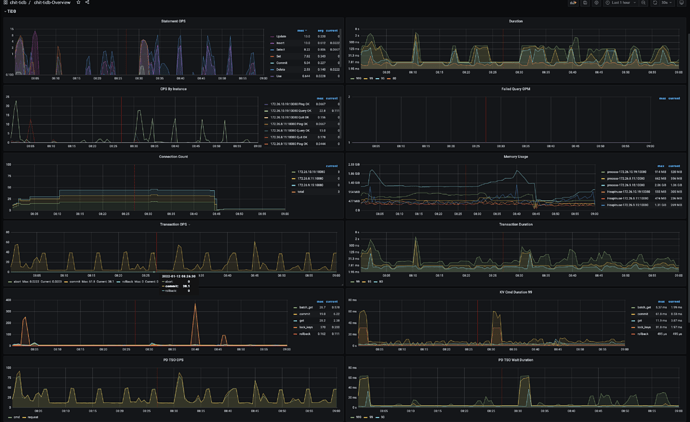

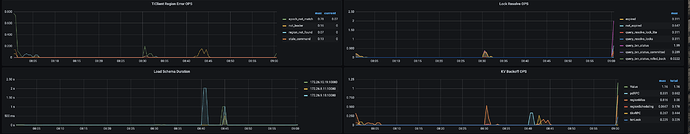

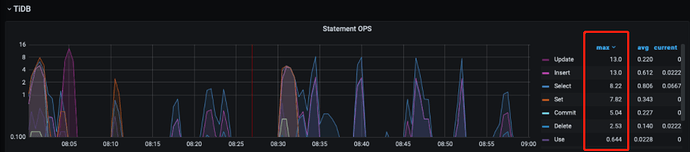

- TiDB Grafana monitoring

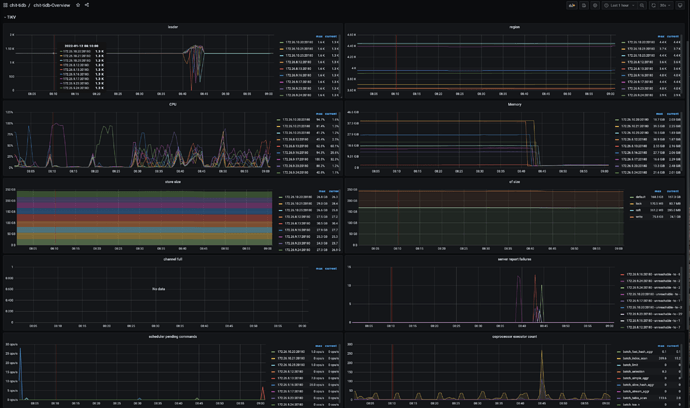

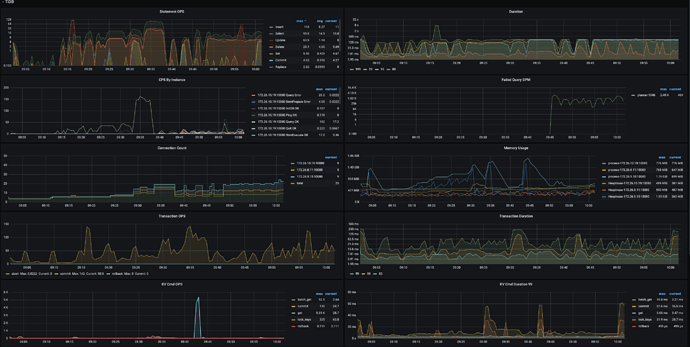

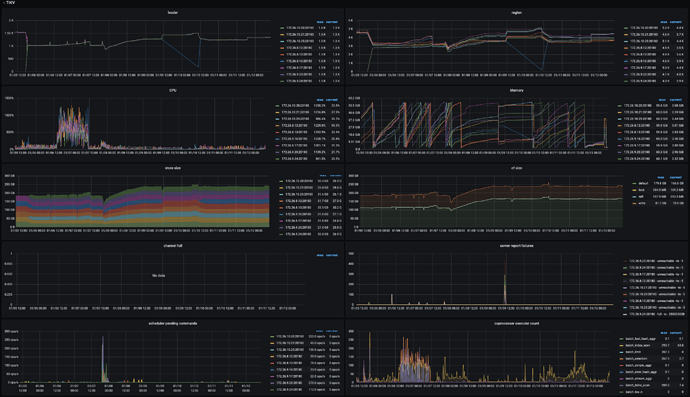

- TiKV Grafana monitoring

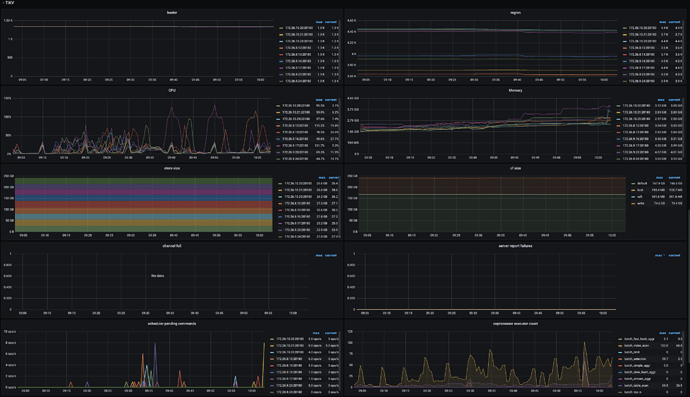

- PD Grafana monitoring

- Logs of the corresponding modules (including 1 hour of logs before and after the issue)
